Optimizing I/O Performance on Linux with io_uring for High-Load Applications

TL;DR

- io_uring is a modern asynchronous I/O interface for the Linux kernel, unifying disk, network, and file operations, significantly outperforming traditional methods in high-load scenarios.

- It reduces system call overhead and context switches by exchanging requests and completions through ring buffers shared between the kernel and user space.

- Key advantages: reduced latency, increased throughput, significant decrease in CPU utilization for I/O-intensive tasks.

- Relevant for 2026 as the de facto standard for high-performance databases, proxy servers, game servers, and any applications with extreme I/O requirements.

- Requires a deep understanding of low-level programming and Linux kernel specifics, but provides unprecedented control and efficiency.

- It is recommended to start using io_uring with Linux kernel 5.10+, optimally with versions 6.x for maximum stability and functionality.

- Economically beneficial due to reduced hardware requirements and optimized cloud costs, despite higher initial development costs.

1. Introduction

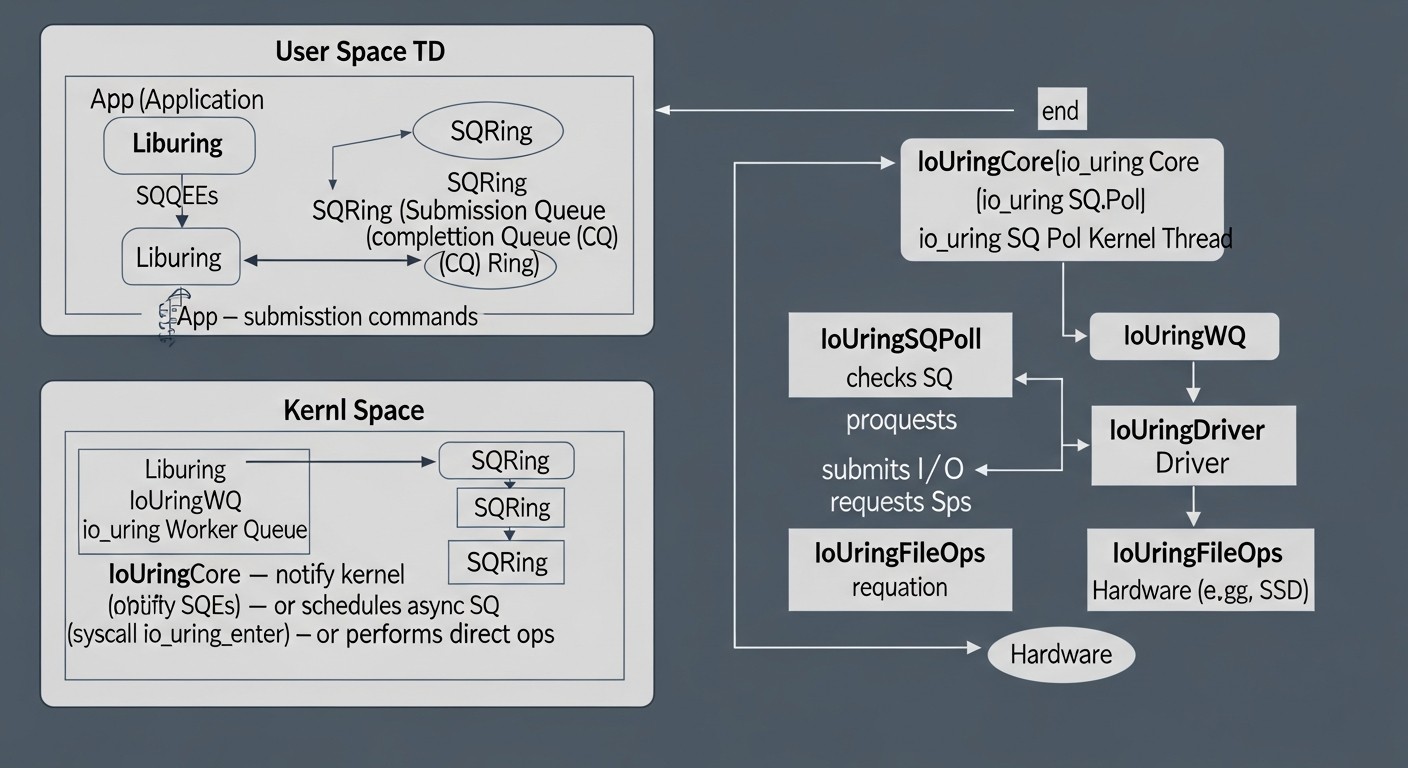

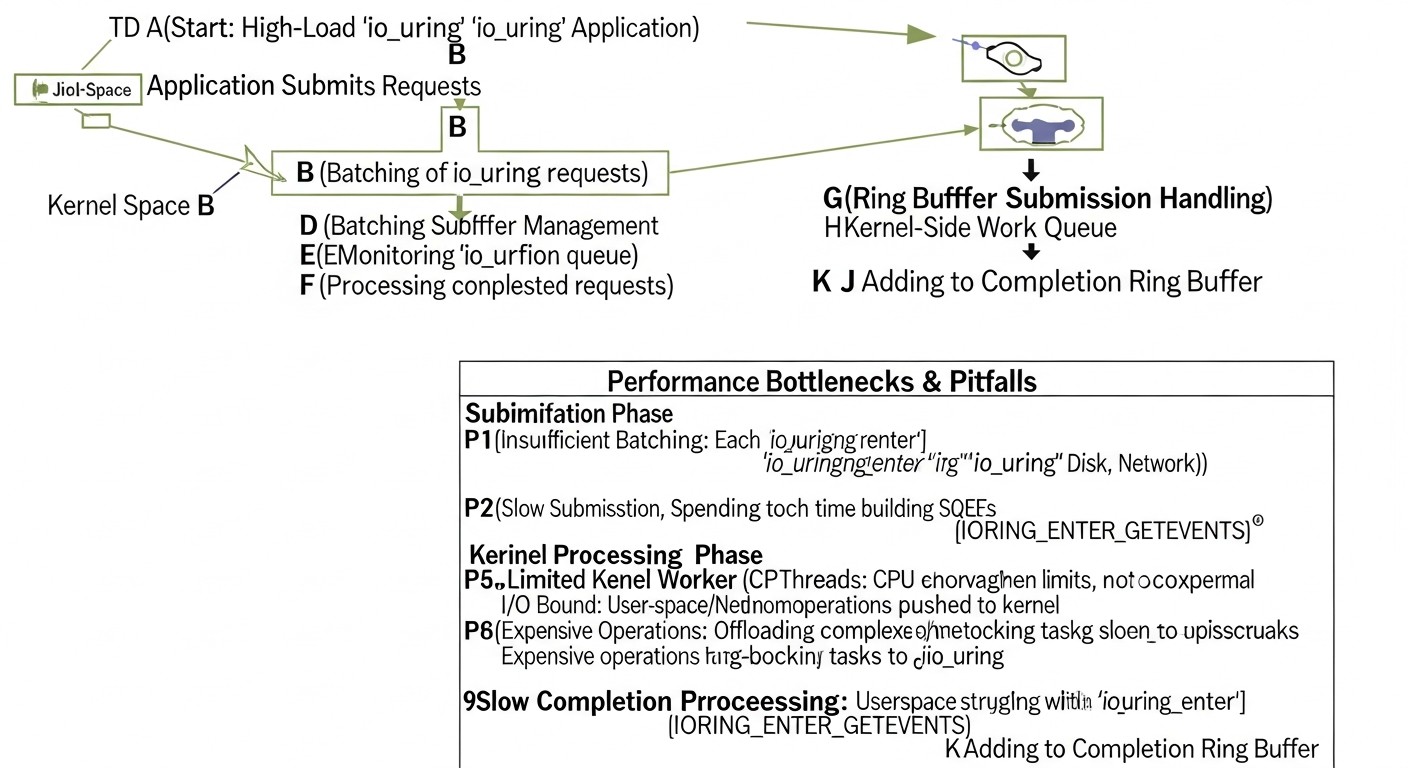

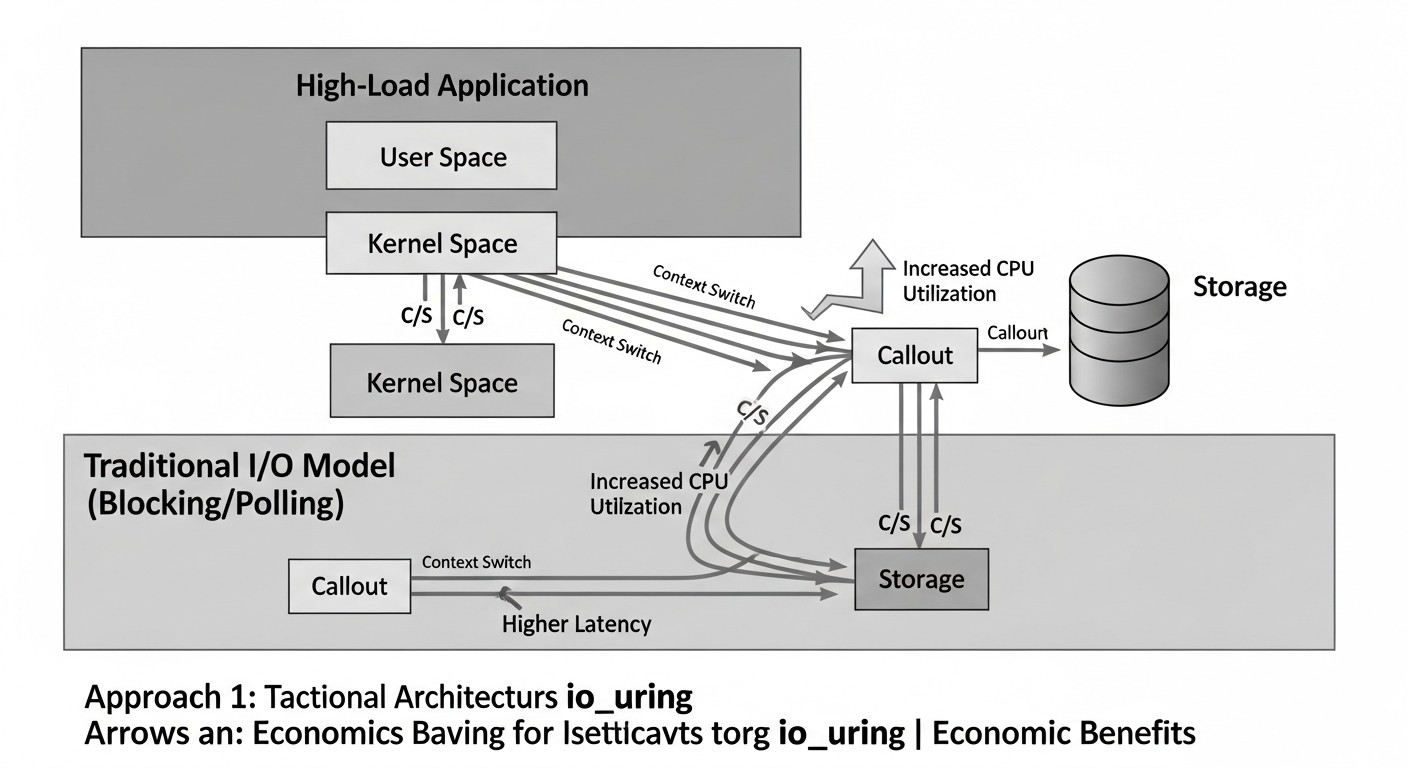

Diagram: 1. Introduction


In an era of exponential data growth, widespread microservice architecture, and real-time transaction processing requirements, I/O subsystem performance has become a critical success factor for any high-load application. By 2026, this trend will only intensify, as users expect instant responses, and business processes demand the processing of petabytes of information with minimal latency.

Traditional approaches to I/O management in Linux, such as blocking calls, `select()`/`poll()`/`epoll()` for network operations, and `libaio` for disk operations, are showing their limitations. They suffer from high overhead due to system calls (syscalls), frequent context switches between user space and the kernel, and API fragmentation for different I/O types. These drawbacks lead to inefficient CPU resource utilization, increased latency, and limited scalability.

This is where io_uring comes into play: a revolutionary asynchronous I/O interface for the Linux kernel, introduced in kernel version 5.1. io_uring aims to solve fundamental I/O performance issues by unifying file, disk, and network operations under a single, highly efficient API. It lets an application queue many I/O operations and hand them to the kernel with one system call per batch, or with no submission system calls at all when kernel-side polling (SQPOLL) is enabled, significantly reducing overhead and freeing up the CPU for useful work.

This article is intended for a wide range of technical specialists facing the challenges of high-load systems: DevOps engineers striving to optimize infrastructure; Backend developers (especially in Python, Node.js, Go, PHP) working on the performance of their services; SaaS project founders for whom economic efficiency and scalability are important; System administrators responsible for server stability and speed; and Technical Directors of startups making architectural decisions. We will delve into the details of io_uring, examine its advantages, disadvantages, practical application aspects, and economic feasibility to help you make informed decisions in the world of high-performance systems in 2026.

2. Key I/O Performance Criteria and Factors

For effective I/O performance optimization, it is crucial to clearly understand which metrics and factors are key. A one-sided approach can lead to incorrect conclusions and inefficient solutions. In 2026, as hardware capabilities continue to grow and data processing speed requirements become stricter, a detailed analysis of these criteria becomes even more important.

2.1. Latency

What it is: The time required to complete a single I/O operation, from the moment of request to the moment of result reception. Measured in milliseconds (ms), microseconds (µs), or even nanoseconds (ns) for critically important systems. We distinguish between average latency and percentile latencies (P90, P99, P99.9), which show how long an operation takes for 90%, 99%, or 99.9% of requests, respectively. High values at P99 or P99.9 indicate "tail" latencies, which can significantly degrade user experience.

Why it's important: Directly affects application response speed. For interactive systems (web servers, databases, game servers), low latency is critical for ensuring a smooth user experience. High latencies can lead to timeouts, reduced customer satisfaction, and loss of revenue.

How to evaluate: Use utilities like `fio`, `iostat`, as well as specialized application and infrastructure monitoring tools that collect latency metrics. It is important to measure latency both at the operating system level and at the application level itself.

2.2. Throughput

What it is: The volume of data or number of operations that a system can process per unit of time. For disk operations, this can be MB/s or GB/s; for network operations, packets/s or Gbit/s; for databases, IOPS (input/output operations per second) or transactions/s. IOPS is especially important for databases with a large number of small, random operations.

Why it's important: Determines the overall performance of the system when processing large volumes of data. High throughput allows processing more requests, saving log files, streaming video, or performing analytical tasks faster.

How to evaluate: Similar to latency, using `fio`, `iostat`, `sar`, as well as network analyzers and database performance monitoring. It is important to distinguish between sequential and random throughput, as well as read and write throughput.

2.3. CPU Utilization

What it is: The proportion of processor time used to perform I/O operations and related system calls, context switches, and interrupt handling. High CPU utilization for I/O indicates inefficiency.

Why it's important: Every CPU cycle spent on I/O processing cannot be used to execute useful business logic. Reducing CPU utilization for I/O allows the application to do more work on the same hardware, reducing the need for scaling and, consequently, costs.

How to evaluate: Use `top`, `htop`, `vmstat`, `perf`, `bpftrace` for detailed CPU profile analysis. Pay attention to `%sy` (system time) and `%wa` (I/O wait time).

2.4. Concurrency

What it is: The number of I/O operations that can be performed simultaneously. Modern applications often handle thousands or millions of concurrent requests.

Why it's important: Directly affects the system's ability to serve multiple clients or internal processes without performance degradation. Traditional blocking I/O models scale poorly with concurrency, requiring a large number of threads or processes, which increases overhead.

How to evaluate: Load testing with an increasing number of concurrent clients/requests. Monitoring the number of active connections, threads, or pending requests.

2.5. Scalability

What it is: The ability of a system to effectively increase its performance by adding additional resources (CPU, RAM, disks, network adapters) or by increasing the load. Ideally, performance grows linearly with resources.

Why it's important: Allows the application to grow with business needs, avoiding costly architectural redesigns or performance limits. Good scalability reduces risks and provides flexibility.

How to evaluate: Conducting load testing on various hardware configurations, analyzing performance as the number of nodes in a cluster or resources on a single node increases.

2.6. Resource Efficiency

What it is: How efficiently the system uses available CPU, RAM, network, and disk bandwidth. Fewer resources for the same work means higher efficiency.

Why it's important: Directly impacts operational expenses (OpEx), especially in cloud environments where every gigabyte of RAM and every CPU hour is paid for. I/O optimization can lead to significant savings.

How to evaluate: Comprehensive monitoring of all system resources, correlating their utilization with achieved throughput and latency.

2.7. Jitter

What it is: Variability or fluctuations in I/O operation latency. Even with low average latency, high jitter (large spreads in P99.9 compared to P50) can create problems.

Why it's important: Unpredictable latencies can be worse than consistently high latency. It complicates planning, leads to unpredictable timeouts, and can cause cascading failures in distributed systems.

How to evaluate: Analysis of latency percentiles (P90, P99, P99.9), building histograms of latency distribution.

Understanding these criteria allows not only diagnosing performance problems but also choosing the most suitable technologies and approaches, such as io_uring, to achieve optimal results in specific scenarios.

3. Comparative Table of I/O Methods

Diagram: 3. Comparative Table of I/O Methods


In the world of Linux, there are many approaches to I/O management, each with its own strengths and weaknesses. io_uring is not a universal solution for all tasks, but in certain scenarios, it demonstrates unprecedented efficiency. In this table, we will compare key I/O methods relevant for 2026 to provide an overview of their applicability and performance.

| Criterion | Blocking I/O (read()/write()) | Multiplexing (epoll/select) | Linux Native AIO (libaio) | io_uring (with SQPOLL) | io_uring (without SQPOLL) |
| --- | --- | --- | --- | --- | --- |
| Year of Introduction / Relevance | 1970s / Still fundamental | 1990s (select), 2002 (epoll) / Widely used | 2002 / Highly specialized | 2019 (Linux 5.1) / Standard for high-perf (2026) | 2019 (Linux 5.1) / Standard for high-perf (2026) |
| API Complexity | Very low | Medium | High | Very high | High |
| Overhead (syscalls per operation) | 1-2 (wait + read/write) | 1 (epoll_wait) + N (read/write) | 2 per batch (io_submit, io_getevents) | ~0 for submission (while the polling thread is awake) | 1 (io_uring_enter) per batch |
| Network Operation Support | Yes | Yes (primary use) | No | Full (accept, connect, recvmsg, sendmsg) | Full (accept, connect, recvmsg, sendmsg) |
| Disk Operation Support | Yes | No (FD readiness only) | Yes (O_DIRECT only) | Full (including cached I/O) | Full (including cached I/O) |
| File Operation Support (general) | Yes | No | Minimal (fsync only; no open, close, or stat) | Full (openat, close, statx, fadvise) | Full (openat, close, statx, fadvise) |
| Asynchronicity | No (blocking) | Pseudo-asynchronous (I/O readiness) | Yes (true asynchronous) | Yes (true asynchronous, kernel-side SQ polling) | Yes (true asynchronous, submission via io_uring_enter) |
| Recommended Scenarios | Simple scripts, low-load applications | High-load network applications (web servers, proxies) | Databases, specialized storage (O_DIRECT) | Extremely high-load applications (databases, caches, message brokers, proxies) | High-load applications where SQPOLL is not critical or undesirable |
| Typical Latency (ns) under High Load (P99) | 20000+ | 5000-10000 | 3000-7000 | 500-1500 | 1000-3000 |
| Max QPS (IOPS) per 1 core (K) (approx.) | 10-20 | 50-150 | 80-200 | 500-1500+ | 200-800 |
| CPU Utilization at 100K IOPS (%) | ~50-70 | ~30-40 | ~20-30 | ~5-15 | ~15-25 |
| Development Cost (relative units, 2026) | 1 | 2-3 | 4-5 | 8-10 | 6-8 |

Note: The figures provided are approximate estimates for high-load scenarios on modern hardware (Intel Xeon/AMD EPYC processors from 2024-2026, NVMe SSDs, 100GbE networks) and may vary significantly depending on the specific application, I/O type, block size, and system configuration. "Development Cost" reflects the relative complexity of learning and integration.

4. Detailed Overview of Each I/O Item/Option

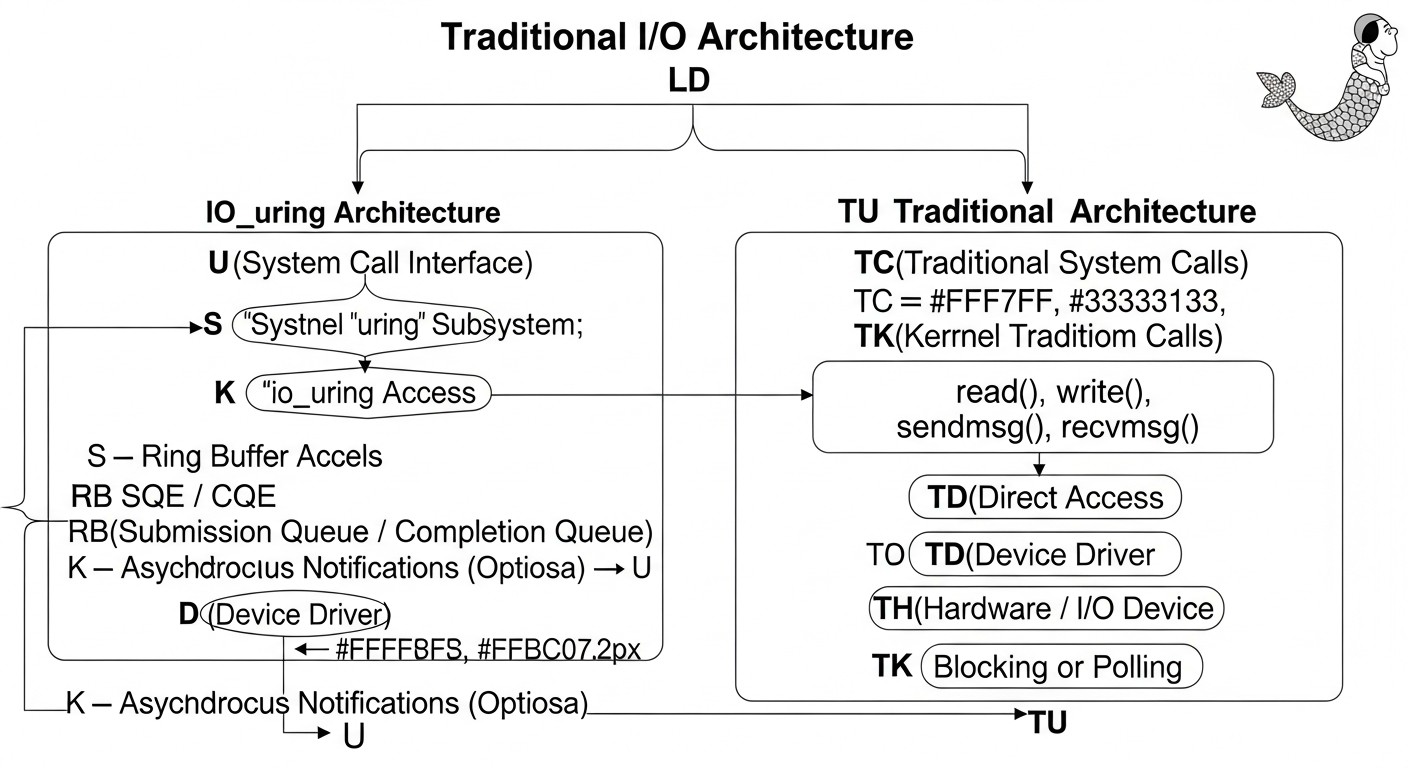

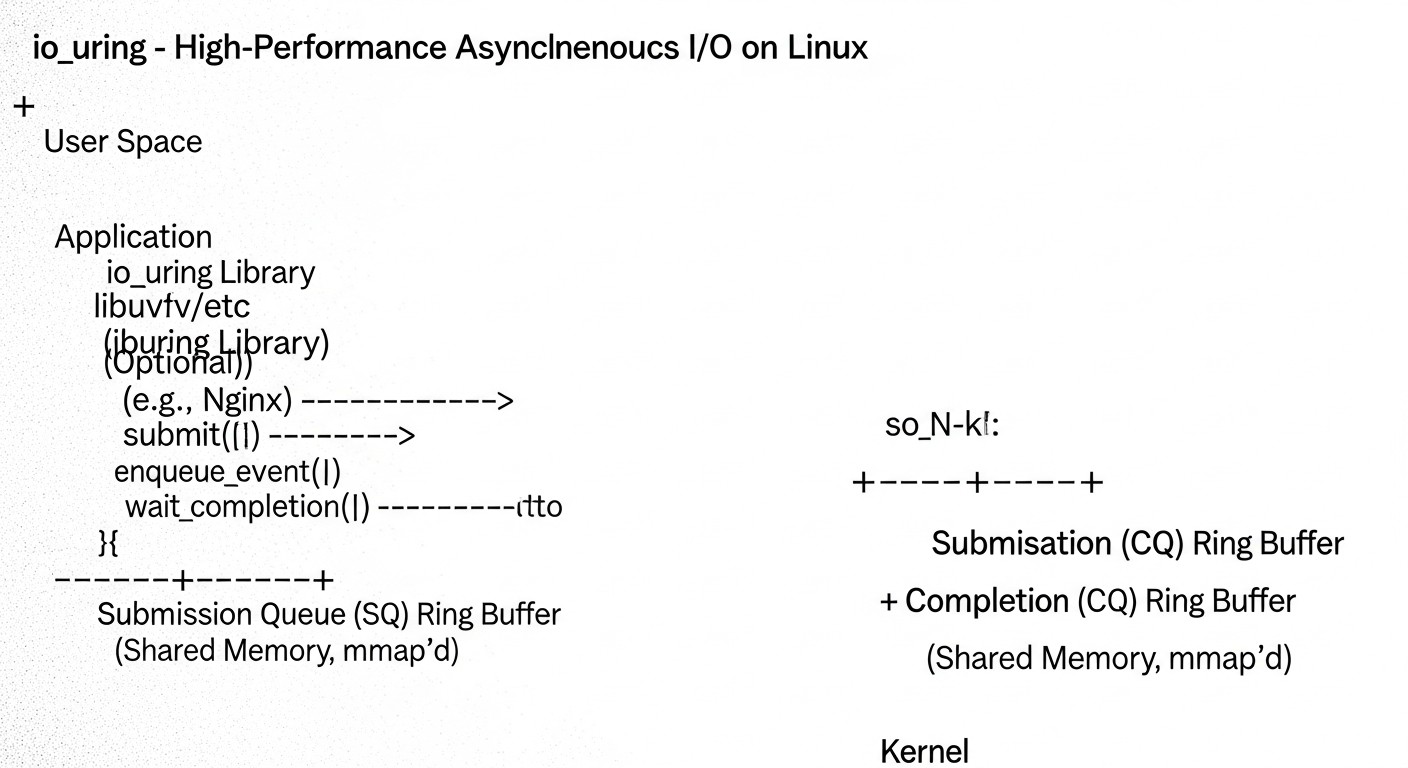

Diagram: 4. Detailed Overview of Each I/O Item/Option


Let's delve into the specifics of each I/O method to understand when and why they are used, and why io_uring is becoming the preferred choice for high-performance systems.

4.1. Blocking I/O (read()/write())

This is the simplest and most intuitive way to perform input/output operations. When an application calls read() or write(), it blocks until the operation is completed by the kernel. During this time, the thread that initiated the I/O enters a waiting state, and the kernel scheduler can switch to another thread or process. The simplicity of the API makes it ideal for simple applications and scripts where I/O performance is not a bottleneck. However, for high-load applications, this model leads to serious scalability issues. Each I/O operation requires a system call, context switch, and waiting, which incurs huge overhead with a large number of parallel requests. To handle parallelism, many threads or processes must be launched, each of which can be blocked, leading to CPU "stalling" on context switches and inefficient resource utilization. In 2026, this method remains basic but is absolutely unsuitable for systems that need to process tens and hundreds of thousands of I/O operations per second.

4.2. I/O Multiplexing (select(), poll(), epoll())

These mechanisms were developed to address the blocking I/O problem in network applications. Instead of blocking on each read/write operation, an application can "ask" the kernel which of many open file descriptors (sockets, pipes) are ready for reading or writing. select() and poll() suffer from scaling issues with the number of file descriptors (the whole descriptor set is scanned on every call, O(N)), whereas epoll (introduced in Linux 2.5.44) is significantly more efficient: the kernel keeps the interest set between calls and maintains a ready list through callbacks, so the cost of epoll_wait() depends on the number of ready descriptors rather than the number being monitored. epoll allows an application to efficiently handle thousands and even millions of concurrent network connections with minimal overhead. It is the cornerstone of most modern web servers, proxies, and message brokers. However, epoll is a readiness notification mechanism, not asynchronous I/O: after an event arrives, the application still has to issue read()/write() itself, one system call per operation. With non-blocking sockets these calls return EAGAIN instead of blocking when no data or buffer space is available, but regular files cannot be monitored this way at all (epoll_ctl() rejects them with EPERM), and a read() from disk can block regardless. This limits its application in scenarios with intensive disk I/O, such as databases.

4.3. Linux Native AIO (libaio)

libaio (or Linux Native AIO) was developed specifically to address asynchronous disk I/O problems, especially for databases that require direct disk access (O_DIRECT) without kernel page cache intervention. It allows an application to submit a batch of I/O requests to the kernel and then asynchronously receive notifications of their completion. This eliminates blocking of the calling thread and allows efficient use of high-performance storage (e.g., NVMe SSDs). The libaio API is quite complex and low-level, requiring manual management of iocb (I/O control blocks) and io_event. The main limitations of libaio are its exclusive focus on disk operations (and only with O_DIRECT for asynchronicity) and lack of network I/O support. Furthermore, even with libaio, each batch of operations requires io_submit() and io_getevents() system calls, which, although better than one call at a time, still creates overhead that io_uring aims to eliminate. By 2026, libaio has largely been superseded by io_uring in new high-performance projects, although it is still present in older, time-tested systems.

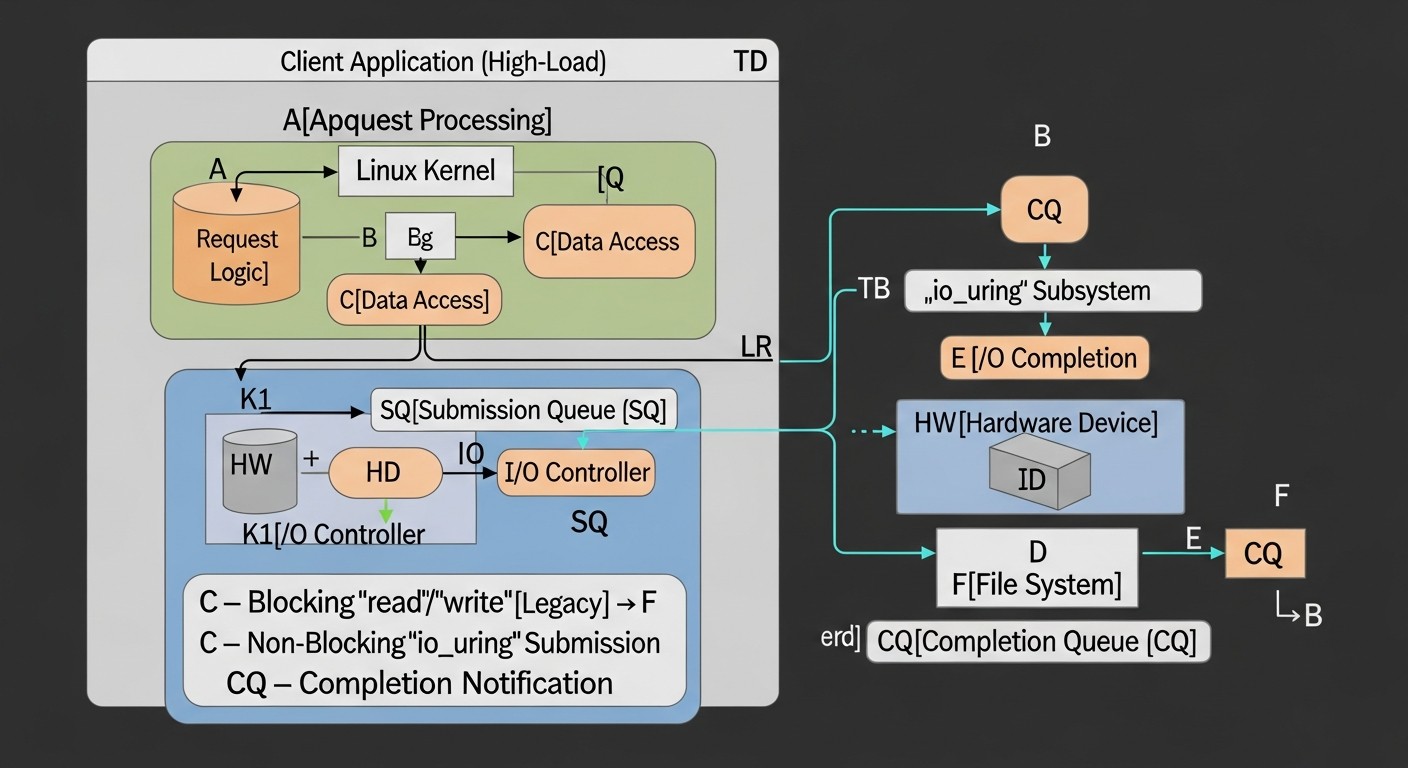

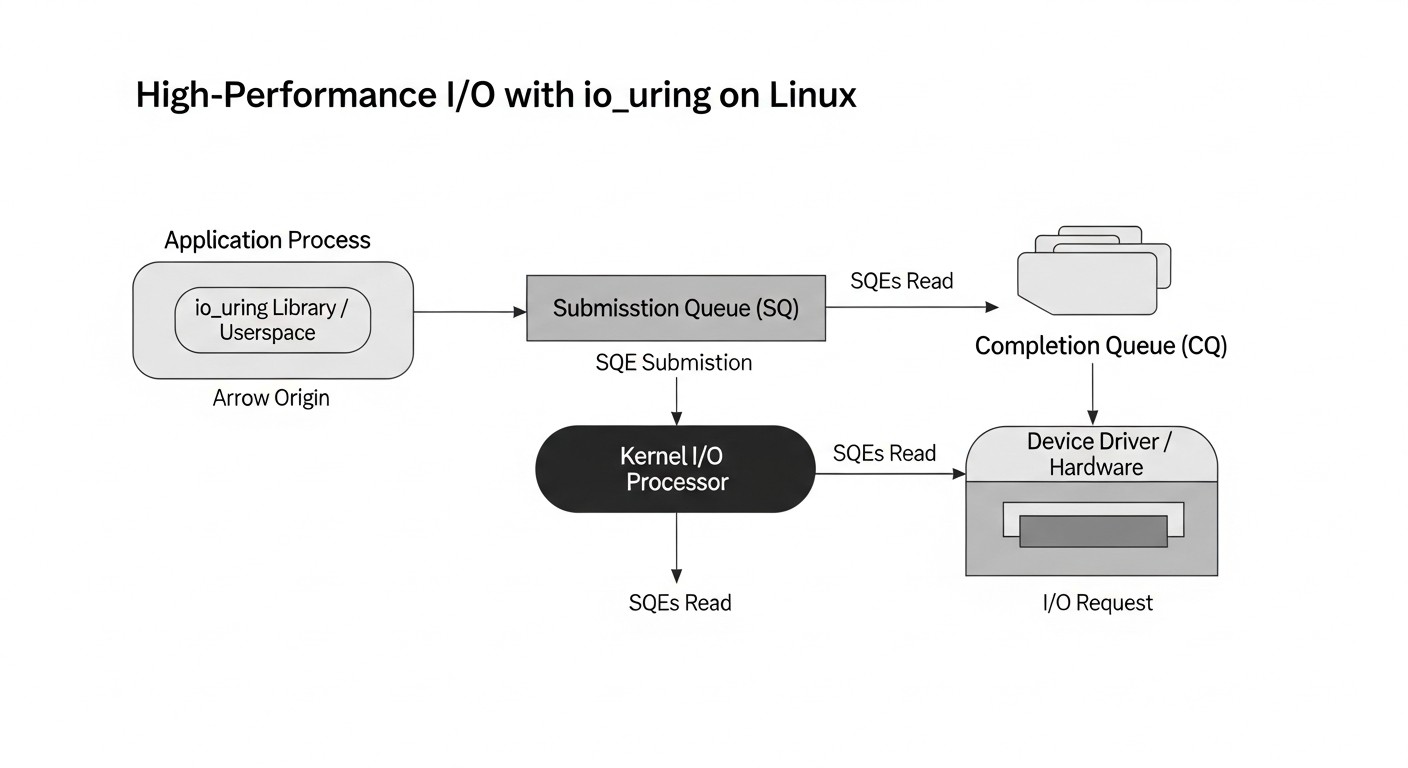

4.4. io_uring: Unified Asynchronous I/O for Linux

io_uring is the culmination of decades of I/O development in Linux. Its main goal is to provide a unified, highly efficient, asynchronous interface for all types of I/O operations: disk, file, network, as well as a number of other system operations. Unlike previous methods, io_uring operates on a "fire and forget" principle with minimal kernel interaction after initial setup.

io_uring Architecture:

- Ring Buffers: At the heart of io_uring are two ring buffers shared between the kernel and the application (mapped into user-space memory):

  - Submission Queue (SQ): The request queue. The application places operation descriptors (Submission Queue Entries, SQEs) here, describing what needs to be done (e.g., read a file, send data over a socket).

  - Completion Queue (CQ): The completion queue. The kernel places the results of completed operations (Completion Queue Entries, CQEs) here, notifying the application of the status and outcome of each operation.

- Minimal System Calls: After initializing io_uring, the application fills SQEs in the SQ and hands the whole batch to the kernel with a single io_uring_enter() system call; the same call can also wait for completions. The kernel executes the operations asynchronously and places the results in the CQ, which the application reads directly from shared memory without a system call. With SQPOLL enabled (described below in this list), even the submission call is only needed to wake the kernel polling thread after it has gone idle. This radically reduces system call overhead.

- Unification: io_uring supports a wide range of operations, including:

  - Disk/File I/O: read, write, fsync, openat, close, statx, fadvise. Supports both cached and direct I/O (O_DIRECT).

  - Network I/O: accept, connect, recvmsg, sendmsg. This allows for the creation of high-performance network servers that previously relied on epoll.

  - Other Operations: timeout and link_timeout (asynchronous timers and per-operation timeouts), async_cancel (operation cancellation), uring_cmd (extensible commands for drivers).

- SQPOLL (Submission Queue Polling): This is an optional mode where a separate kernel thread polls the SQ for new requests. While that thread is active, the application does not need the io_uring_enter() system call to submit requests, reducing overhead to a minimum. It is ideal for extremely high loads, but the polling thread can keep a CPU core busy; after a configurable idle period (sq_thread_idle) it goes to sleep and has to be woken with io_uring_enter().

- IORING_SETUP_IOPOLL: This is a mode where completions are not signalled by interrupts; instead, the device is busy-polled for finished requests when the application asks for completions. It requires an active polling loop in the application, files opened with O_DIRECT, and a storage stack that supports polled I/O (e.g., NVMe with poll queues configured). Used in scenarios with very low latencies where even interrupts are too expensive.

- Resource Registration: io_uring allows pre-registration of memory buffers and file descriptors with the kernel. Registered buffers are pinned and mapped once instead of on every operation, and registered files avoid per-operation descriptor lookups and reference counting, further reducing overhead.

Advantages: io_uring significantly reduces latency, increases throughput, and decreases CPU utilization for I/O-intensive tasks. It allows processing millions of IOPS on a single CPU core, which was unthinkable with previous methods. This is achieved by minimizing system calls, context switches, and using the kernel to perform asynchronous operations.

Disadvantages: Complex and low-level API, requiring a deep understanding of kernel operation and careful memory management. High entry barrier for developers. Errors in working with io_uring can lead to difficult-to-diagnose problems.

Who it's for: io_uring is an ideal solution for developers of high-performance databases, key-value stores, message brokers, proxy servers, game servers, high-load web servers, and any other applications where I/O is a bottleneck and maximum efficiency in hardware resource utilization is required. In 2026, it is becoming the standard for such systems.

5. Practical Tips and Recommendations for io_uring

Diagram: 5. Practical Tips and Recommendations for io_uring


Integrating io_uring into a high-load application is a non-trivial task that requires careful attention and deep understanding. Here are some practical tips and step-by-step recommendations based on real-world experience.

5.1. Choosing the Right Linux Kernel Version

For full utilization of io_uring, it is strongly recommended to use Linux kernel version 5.10 or newer. In versions 5.1-5.9, functionality was limited and there were bugs, while important operations were still being added release by release (for example, sendmsg/recvmsg in 5.3, accept and connect in 5.5, and plain read/write, send/recv, openat, and close in 5.6). The optimal choice for a production environment in 2026 is 6.x series kernels (e.g., 6.6 LTS or newer), which offer maximum stability, performance, and a full set of features, including extended support for file operations, timers, and request cancellation.

```bash
# Check kernel version
uname -r
# Expected output: 6.6.10-amd64 or newer
```

5.2. Using the liburing Library

While it's possible to work with io_uring directly through system calls, it is highly recommended to use the user-space library liburing. It provides a convenient and safe high-level API that abstracts away low-level details of working with ring buffers and system calls, significantly simplifying development and reducing the likelihood of errors.

```bash
# Install liburing (example for Debian/Ubuntu)
sudo apt update
sudo apt install liburing-dev
# For other distributions, use the corresponding package manager (dnf, pacman)
```

5.3. Initializing the io_uring Context

The first step is to create an io_uring instance. It is necessary to define the size of the ring buffers (number of SQE entries). The larger the size, the more operations can be queued without an io_uring_enter system call.

```c
#include <liburing.h>
#include <stdio.h>
#include <stdlib.h>
#include <string.h> // strerror(), memset()

#define QUEUE_DEPTH 4096 // Recommended queue depth for high-load systems

struct io_uring ring;

int main(void) {
    struct io_uring_params p;
    memset(&p, 0, sizeof(p)); // Unused fields must be zero

    // Flags can be added, e.g., IORING_SETUP_SQPOLL
    // p.flags |= IORING_SETUP_SQPOLL | IORING_SETUP_SQ_AFF;
    // p.sq_thread_cpu = 0; // Pin SQPOLL to a specific CPU core (needs IORING_SETUP_SQ_AFF)

    int ret = io_uring_queue_init_params(QUEUE_DEPTH, &ring, &p);
    if (ret < 0) {
        fprintf(stderr, "io_uring_queue_init_params: %s\n", strerror(-ret));
        return 1;
    }

    printf("io_uring initialized successfully with queue depth %d\n", QUEUE_DEPTH);

    // ... your io_uring code ...

    io_uring_queue_exit(&ring);
    return 0;
}
```

5.4. Registering Buffers and File Descriptors

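To achieve maximum performance, register frequently used memory buffers and file descriptors. Registration lets the kernel pin and map the buffers once and keep long-lived references to the files, instead of repeating that work for every operation. The benefit applies only to operations that actually reference the registered resources: the fixed read/write variants (io_uring_prep_read_fixed(), io_uring_prep_write_fixed()) for buffers, and SQEs flagged with IOSQE_FIXED_FILE for files.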

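```c
// Example of buffer registration
char *buffer_pool[NUM_BUFFERS]; // Pre-allocated buffers
// ... buffer_pool initialization ...

// io_uring_register_buffers() takes an array of struct iovec (<sys/uio.h>)
struct iovec iovecs[NUM_BUFFERS];
for (int i = 0; i < NUM_BUFFERS; i++) {
    iovecs[i].iov_base = buffer_pool[i];
    iovecs[i].iov_len = BUFFER_SIZE;
}

int ret = io_uring_register_buffers(&ring, iovecs, NUM_BUFFERS);
if (ret < 0) {
    fprintf(stderr, "io_uring_register_buffers: %s\n", strerror(-ret));
    // Error handling
}

// Example of file descriptor registration
int files[NUM_FILES]; // Array of open FDs
// ... files initialization ...

ret = io_uring_register_files(&ring, files, NUM_FILES);
if (ret < 0) {
    fprintf(stderr, "io_uring_register_files: %s\n", strerror(-ret));
    // Error handling
}

// Registered resources are then referenced by index, e.g.:
//   io_uring_prep_read_fixed(sqe, fd, buffer_pool[i], BUFFER_SIZE, offset, i);
//   sqe->flags |= IOSQE_FIXED_FILE; // sqe->fd is now an index into files[]
```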

5.5. Batching Operations

One of the main principles of io_uring is to submit the maximum possible number of operations in a single system call. Instead of calling io_uring_submit() after each operation, accumulate SQEs in the queue and submit them in a batch. This sharply reduces the number of context switches.

```c
// Example of batching read operations
for (int i = 0; i < batch_size; ++i) {
    struct io_uring_sqe *sqe = io_uring_get_sqe(&ring);
    if (!sqe) {
        // SQ queue is full, submit current operations and try again
        io_uring_submit(&ring);
        sqe = io_uring_get_sqe(&ring); // Retry
        if (!sqe) {
            fprintf(stderr, "Failed to get SQE even after submit\n");
            break;
        }
    }
    io_uring_prep_read(sqe, file_descriptors[i], buffers[i], BUFFER_SIZE, offsets[i]);
    io_uring_sqe_set_data(sqe, (void*)(long)request_id[i]); // User data for identification
}
io_uring_submit(&ring); // Submit remaining operations
```

5.6. Handling Completed Operations

After submitting requests, it is necessary to periodically check the Completion Queue for completed operations. This can be done in both blocking (io_uring_wait_cqe) and non-blocking (io_uring_peek_cqe, io_uring_for_each_cqe) ways.

```c
struct io_uring_cqe *cqe;
unsigned head;
unsigned count = 0;

// Non-blocking CQ polling
io_uring_for_each_cqe(&ring, head, cqe) {
    long request_id = (long)io_uring_cqe_get_data(cqe);
    int res = cqe->res; // Operation result (number of bytes or negative error code)
    if (res < 0) {
        fprintf(stderr, "I/O error for request %ld: %s\n", request_id, strerror(-res));
    } else {
        printf("Request %ld completed successfully, bytes: %d\n", request_id, res);
    }
    count++;
}
io_uring_cq_advance(&ring, count); // Mark processed CQEs
```

5.7. Using IORING_SETUP_SQPOLL

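For the most extreme loads, consider using IORING_SETUP_SQPOLL. This flag, passed when initializing io_uring, creates a kernel thread that polls the SQ. While the thread is active, no io_uring_enter() system call is needed to submit requests, but the thread keeps a CPU core busy; after sq_thread_idle milliseconds without work it goes to sleep, and liburing's io_uring_submit() wakes it up when needed. Pinning the thread to a specific core (sq_thread_cpu together with the IORING_SETUP_SQ_AFF flag) can improve cache locality.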

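```c
struct io_uring_params p;
memset(&p, 0, sizeof(p));
p.flags |= IORING_SETUP_SQPOLL | IORING_SETUP_SQ_AFF; // SQ_AFF makes sq_thread_cpu take effect
p.sq_thread_cpu = 0;     // Pin to CPU 0
p.sq_thread_idle = 2000; // Example value: sleep after 2000 ms without submissions
int ret = io_uring_queue_init_params(QUEUE_DEPTH, &ring, &p);
// ...
```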

5.8. Asynchronous Network Operations

io_uring supports asynchronous network operations and can replace epoll-based event loops in high-performance network servers.

```c
// Example of asynchronous accept
// client_addr and client_addr_len must remain valid until the accept completes
struct io_uring_sqe *sqe = io_uring_get_sqe(&ring);
io_uring_prep_accept(sqe, listen_fd, (struct sockaddr *)&client_addr, &client_addr_len, 0);
io_uring_sqe_set_data(sqe, (void*)(long)ACCEPT_REQ_ID);
io_uring_submit(&ring);

// Example of asynchronous recv
// client_fd is the descriptor returned in cqe->res of the completed accept
sqe = io_uring_get_sqe(&ring);
io_uring_prep_recv(sqe, client_fd, buffer, BUFFER_SIZE, 0);
io_uring_sqe_set_data(sqe, (void*)(long)RECV_REQ_ID);
io_uring_submit(&ring);
```

5.9. Buffer Lifecycle Management

When using registered buffers, ensure they remain valid until the kernel completes all operations with them. Using "user_data" in SQE to pass pointers to request structures or identifiers helps correctly manage buffers and resources after an operation completes.

5.10. Thorough Testing and Benchmarking

After implementing io_uring, it is essential to conduct load testing using fio (with the io_uring engine), wrk, ab, or specialized benchmarks. Compare performance with previous implementations, analyze metrics for latency, throughput, and CPU utilization. io_uring does not always provide a benefit for all types of workloads, so it's important to ensure it truly solves your problem.

6. Typical Errors When Working with io_uring

Diagram: 6. Typical Errors When Working with io_uring


io_uring is a powerful, yet complex tool. Errors in its use can lead to unpredictable behavior, memory leaks, or, ironically, to performance degradation. Here are the most common mistakes developers encounter:

6.1. Ignoring the Linux Kernel Version

Error: Attempting to use io_uring on older kernels (pre-5.10) or expecting full functionality on early versions (5.1-5.9).

Consequences: Lack of support for necessary operations (e.g., network operations), presence of bugs, instability, inability to use key optimizations. The application may compile but crash at runtime or operate incorrectly.

How to avoid: Always check the kernel version using uname -r. For serious projects in 2026, use 6.x LTS kernels. Include kernel version checks at application startup or in deployment scripts.

6.2. Lack of Operation Batching

Error: Sending each I/O request to the kernel via io_uring_submit() (or io_uring_enter()) individually, instead of combining them into batches.

Consequences: Performance degradation, as each io_uring_submit() call is a system call that involves a context switch. This negates one of io_uring's main advantages, making it not much better than traditional AIO or even epoll for a large number of small operations.

How to avoid: Batch requests as much as possible. Accumulate SQEs in the Submission Queue up to a certain threshold (e.g., 64, 128, 256 operations) or until a short timeout expires, before calling io_uring_submit(). Batched SQEs may complete in any order; when ordering matters, chain dependent operations with IOSQE_IO_LINK, or use IOSQE_IO_DRAIN to hold an operation back until all previously submitted ones have completed. The drain flag stalls the pipeline, so it is rarely needed.

6.3. Incorrect Management of Buffer and File Descriptor Lifecycles

Error: Freeing memory buffers or closing file descriptors before the kernel has completed all operations with them. Or conversely, not freeing resources after their use.

Consequences: Memory leaks, "use-after-free" errors, application crashes, data corruption. The kernel works with pointers to your buffers; if they become invalid, undefined behavior occurs.

How to avoid:

- Use user_data in the SQE to associate the request with context (e.g., a pointer to a request structure including buffers).

- Free or reuse buffers only after receiving the corresponding CQE (Completion Queue Entry) indicating operation completion.

- Register buffers and file descriptors using io_uring_register_buffers() and io_uring_register_files(). This helps the kernel manage resources more safely and reduces overhead.

6.4. Incorrect Handling of Completed Operations

Error: Incorrectly reading the Completion Queue (CQ) or ignoring error codes in CQE.

Consequences: Application freezes (if the CQ overflows and new operations cannot be completed), data loss, incorrect application state, missed I/O errors.

How to avoid:

- Always check cqe->res for errors (negative values). Use strerror(-cqe->res) to get a textual description of the error.

- Regularly poll the CQ and advance the head pointer using io_uring_cq_advance() after processing CQEs.

- Use io_uring_wait_cqe() with caution to avoid blocking the main processing loop for too long if there are other tasks.

6.5. Incorrect Use or Ignoring IORING_SETUP_SQPOLL

Error: Enabling IORING_SETUP_SQPOLL without understanding its consequences (kernel CPU consumption) or not using it in scenarios where it could provide a significant performance boost.

Consequences: If SQPOLL is enabled unnecessarily, you waste CPU core resources. If it's not used where needed, the application suffers from superfluous io_uring_enter() system calls.

How to avoid: SQPOLL is intended for extreme workloads where every system call is critical. Apply it only after thorough benchmarking. Ensure you bind the SQPOLL thread to a specific CPU (set p.sq_thread_cpu and include IORING_SETUP_SQ_AFF in p.flags; without that flag the CPU setting is ignored) to avoid caching and isolation issues.

6.6. Lack of Operation Cancellation Handling

Error: Failure to cancel stuck or irrelevant I/O operations, especially in long-lived network connections or when working with slow disks.

Consequences: Resource hangs, timeouts, kernel memory and CPU consumption for operations that are no longer needed by the application.

How to avoid: Use IORING_OP_ASYNC_CANCEL. Store user_data for each request so that a specific operation can be identified and canceled. This is especially important for network operations with timeouts or when closing client connections.

6.7. Insufficient Logging and Monitoring

Error: Lack of detailed logging for io_uring errors and performance metrics.

Consequences: Difficulties in diagnosing problems in a production environment, inability to identify bottlenecks, suboptimal system performance.

How to avoid: Log all errors returned by io_uring. Monitor the number of SQEs, CQEs, operation latencies, the number of io_uring_enter() system calls, and CPU utilization (especially %sy and %wa) using tools such as bpftrace, perf, iostat. To inspect the current state of an io_uring instance (queue head/tail positions, registered resources), read /proc/<pid>/fdinfo/<fd> for the ring's file descriptor.

7. Checklist for Practical Application of io_uring

This checklist will help you systematize the process of implementing and optimizing io_uring in your application, minimizing risks and maximizing performance.

7.1. Preparation and Planning

- Define the application's I/O profile:

  - What type of I/O dominates: disk (random/sequential, small/large blocks), network (many connections/low traffic, few connections/high traffic)?

  - What are the current metrics: latency (P99, P99.9), throughput (IOPS/MB/s), CPU utilization?

  - What current I/O methods are being used?

- Check Linux kernel version: Ensure kernel 5.10+ is used (6.x LTS recommended). If not, plan an upgrade.

- Assess integration complexity: io_uring requires significant development effort. Evaluate whether this effort is justified by the potential performance gain.

7.2. io_uring Initialization

- Use `liburing`: Include the liburing library in your project to simplify working with the API.

- Initialize io_uring context:

  - Determine the optimal queue depth (`QUEUE_DEPTH`) for SQ and CQ, based on expected parallelism.

  - Select initialization flags:

    - `IORING_SETUP_SQPOLL`: If extremely low latency is required and you are willing to dedicate a CPU core.

    - `IORING_SETUP_SQ_AFF`: Used together with `IORING_SETUP_SQPOLL` to pin the SQPOLL thread to the core given in `p.sq_thread_cpu`.

    - `IORING_SETUP_IOPOLL`: For devices that support kernel-side completion polling (e.g., NVMe). Files must be opened with O_DIRECT.

- Register resources:

  - Register memory buffers (`io_uring_register_buffers`) if you will be using fixed buffers.

  - Register file descriptors (`io_uring_register_files`) if you are working with a persistent set of files/sockets.

  - Remember to properly manage the lifecycle of registered resources.
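For the queue depth item above, one possible starting point is Little's law: the number of requests in flight is roughly throughput multiplied by latency. This is a sizing heuristic swapped in for illustration, not a rule from the io_uring documentation. The helper names are hypothetical, and the upper bound of 32768 entries is an assumption about the kernel's submission queue limit. The kernel rounds the requested entry count up to a power of two, which the sketch mirrors.

```c
#include <assert.h>
#include <stdint.h>

/* Round up to the next power of two, mirroring what the kernel does
 * with the entries argument at ring setup. */
static uint32_t round_up_pow2(uint32_t n)
{
    uint32_t p = 1;
    assert(n >= 1 && n <= (UINT32_C(1) << 31));
    while (p < n)
        p <<= 1;
    return p;
}

/* Little's law: in-flight requests = throughput (ops/s) * latency (s).
 * latency_us is the expected per-operation latency in microseconds. */
static uint32_t suggest_queue_depth(uint64_t target_iops, uint64_t latency_us)
{
    uint64_t in_flight = (target_iops * latency_us + 999999) / 1000000; /* ceil */
    if (in_flight < 1)
        in_flight = 1;
    if (in_flight > 32768)   /* assumed SQ entry limit */
        in_flight = 32768;
    return round_up_pow2((uint32_t)in_flight);
}
```

For example, 200,000 IOPS at an expected 500 microseconds per operation gives about 100 requests in flight, so a depth of 128. Treat the result as a first guess to refine with benchmarks.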

7.3. I/O Logic Implementation

- Implement operation batching:

  - Accumulate SQEs in the Submission Queue.

  - Call `io_uring_submit()` only when the SQ is full or a short timeout has expired.

- Design request structure: Use `io_uring_sqe_set_data()` to bind user data (pointers to buffers, request IDs, callbacks) to each SQE.

- Implement completion handling:

  - Periodically poll the Completion Queue.

  - Use `io_uring_for_each_cqe()` for efficient processing of all completed events.

  - Always check `cqe->res` for errors and log them.

  - After processing all CQEs, advance the `head` pointer using `io_uring_cq_advance()`.

- Handle operation cancellation: Implement a mechanism to cancel stuck or irrelevant requests using `IORING_OP_ASYNC_CANCEL`.
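The batching rule in this checklist ("submit when the SQ is full or a short timeout has expired") can be captured in a small policy object. The sketch below is an assumption about how one might structure it; the `batcher` type and its functions are hypothetical, and timestamps are expected to come from a monotonic clock such as `clock_gettime(CLOCK_MONOTONIC)`.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* Hypothetical batching policy state for one ring. */
struct batcher {
    unsigned pending;         /* SQEs prepared but not yet submitted */
    unsigned max_batch;       /* flush once this many SQEs are pending */
    uint64_t first_queued_ns; /* when the oldest pending SQE was prepared */
    uint64_t max_delay_ns;    /* flush once the oldest SQE is this old */
};

/* Call after preparing each SQE. */
static void batcher_on_prepare(struct batcher *b, uint64_t now_ns)
{
    if (b->pending == 0)
        b->first_queued_ns = now_ns;
    b->pending++;
}

/* True when the batch is full or the oldest SQE has waited long enough. */
static bool batcher_should_flush(const struct batcher *b, uint64_t now_ns)
{
    if (b->pending == 0)
        return false;
    if (b->pending >= b->max_batch)
        return true;
    return now_ns - b->first_queued_ns >= b->max_delay_ns;
}

/* Call right after io_uring_submit() has been issued for the batch. */
static void batcher_on_flush(struct batcher *b)
{
    assert(b->pending > 0);
    b->pending = 0;
}
```

In an event loop, `batcher_should_flush()` would be checked after each prepared SQE and once per loop iteration; when it returns true, the application calls `io_uring_submit()` followed by `batcher_on_flush()`. The delay bound keeps latency predictable under light load, while the size bound amortizes the system call under heavy load.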

7.4. Testing, Monitoring, and Optimization

- Conduct benchmarking:

  - Use `fio` (with io_uring engine) for disk operations.

  - Use `wrk`, `ab`, or custom benchmarks for network operations.

  - Compare performance with previous implementation and with other I/O methods.

- Configure monitoring:

  - Track I/O metrics (IOPS, throughput, latency) using `iostat`, `vmstat`.

  - Monitor CPU utilization (`top`, `htop`), especially system time and I/O wait time.

  - Use `bpftrace` or `perf` for in-depth analysis of io_uring behavior in the kernel.

- Isolate CPU: For critically important I/O threads, consider CPU isolation using kernel parameters (`isolcpus`, `nohz_full`) and binding processes/threads to specific cores (`taskset`).

- Fine-tuning: Experiment with ring buffer sizes, batch counts, initialization flags, and buffer registration settings to achieve optimal performance for your specific scenario.
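When a custom benchmark records per-operation latencies, tail metrics such as P99 and P99.9 can be derived from the samples directly. The sketch below uses the nearest-rank method, one of several common percentile definitions; the function name and the per-mille parameter are choices made for this example.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <stdlib.h>

/* qsort comparator for unsigned 64-bit latency samples. */
static int cmp_u64(const void *a, const void *b)
{
    uint64_t x = *(const uint64_t *)a;
    uint64_t y = *(const uint64_t *)b;
    return (x > y) - (x < y);
}

/* Nearest-rank percentile. Sorts the samples in place.
 * permille is the percentile in tenths of a percent:
 * 500 = P50, 990 = P99, 999 = P99.9. */
static uint64_t latency_percentile(uint64_t *samples, size_t n, unsigned permille)
{
    assert(n > 0 && permille >= 1 && permille <= 1000);
    qsort(samples, n, sizeof samples[0], cmp_u64);
    size_t rank = ((size_t)permille * n + 999) / 1000; /* ceil(permille * n / 1000) */
    return samples[rank - 1];
}
```

Comparing these values before and after each tuning step (ring size, batch size, flags) shows whether a change helps the tail and not only the average.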

8. Cost Calculation / Economics of io_uring Usage

Diagram: 8. Cost Calculation / Economics of io_uring Usage


Implementing io_uring is an investment that pays off not only in terms of performance gains but also in significant operational expenditure (OpEx) savings and increased business value. By 2026, as cloud services continue to become more expensive and efficiency requirements grow, the economic aspect of io_uring becomes increasingly evident.

8.1. Reduced Hardware Resource Requirements

The main economic advantage of io_uring lies in its ability to perform a much larger volume of I/O operations with fewer CPU resources. This means that for the same workload, you will need:

- Fewer CPU Cores: io_uring significantly reduces system calls and context switches, freeing up the CPU for useful application work. This allows the same load to be handled on servers with fewer cores or on fewer instances in the cloud.

- Less RAM: Although io_uring itself may require buffer allocation, overall efficiency can allow for a reduction in the amount of RAM needed to maintain a large number of threads or processes, each requiring its own stack and context.

- Fewer Servers/Instances: If one machine can handle 2-3 times more I/O, you can reduce the number of physical servers or cloud instances required for a cluster.

8.2. Cloud Cost Optimization

In cloud environments (AWS, GCP, Azure), where instance costs directly depend on the number of vCPUs and RAM, savings can be dramatic. For example, transitioning from an instance type like m6a.2xlarge to m6a.xlarge or even m6a.large for the same I/O-intensive task can reduce monthly bills by tens of percent. Furthermore, fewer instances can mean lower costs for network traffic, IP addresses, and management.

8.3. Increased Business Value

- Improved User Experience: Reduced I/O latencies directly lead to faster application response, which increases customer satisfaction, reduces churn, and contributes to business growth.

- Increased Throughput: The ability to process more requests or data per unit of time allows for expanding functionality, introducing new services, and supporting a larger volume of business without re-architecting the infrastructure.

- Competitive Advantage: Applications using io_uring can outperform competitors in terms of performance and cost, which is a critical factor in highly competitive SaaS and online service markets.

8.4. Hidden Costs

- High Development Cost: The io_uring API is complex and low-level. Developers will require significant time for learning and integration, which increases initial development costs. Highly qualified specialists are needed.

- Debugging Complexity: Errors in working with io_uring can be difficult to diagnose, increasing the time required to find and fix issues.

- Kernel Version Dependency: The requirement for a newer Linux kernel version can be a problem for companies with conservative update policies.

- CPU Consumption for SQPOLL: If `IORING_SETUP_SQPOLL` is used, one CPU core will be occupied by a kernel thread for as long as it is busy polling, which may be inefficient for low-load systems.

8.5. Table with Example Calculations for Different Scenarios (2026)

Let's assume we have a SaaS application that needs to process 1 million IOPS for its primary database or cache.

| Parameter | Traditional I/O (epoll + blocking read/write) | io_uring (without SQPOLL) | io_uring (with SQPOLL) |
| --- | --- | --- | --- |
| Required Number of CPU Cores (for 1M IOPS) | ~16-24 cores | ~4-8 cores | ~2-4 cores (+1 core for SQPOLL) |
| Required RAM (for 1M IOPS) | ~64-128 GB | ~32-64 GB | ~32-64 GB |
| Instance Type (example, 2026) | 2 x m7g.8xlarge (32 vCPU, 128 GB RAM each) | 1 x m7g.4xlarge (16 vCPU, 64 GB RAM) | 1 x m7g.2xlarge (8 vCPU, 32 GB RAM), one vCPU reserved for SQPOLL |
| Instance Cost (monthly, 2026, est.) | ~$2000 - $3000 | ~$500 - $800 | ~$250 - $400 |
| Power Consumption (kWh, monthly) | ~1000 kWh | ~300 kWh | ~150 kWh |
| Development Cost (arbitrary units, 2026) | 10 | 25 | 30 |
| Support Cost (arbitrary units, 2026) | 5 | 8 | 10 |
| Total TCO over 3 years (arbitrary units) | ~1000 | ~400 | ~300 |

Note: Arbitrary units and instance prices are hypothetical for 2026 and serve to illustrate relative savings. Actual figures will depend on the specific provider, region, discounts, and workload. TCO (Total Cost of Ownership) includes development, support, and 3 years of operation.

As can be seen from the table, despite higher initial development costs, io_uring provides significant savings in operational expenses in the long term, especially for high-load systems. This makes it a highly attractive investment for SaaS projects and other I/O-intensive applications.
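The trade-off between higher development cost and lower operating cost can be made explicit with a break-even calculation. The sketch below assumes a deliberately simple cost model (a one-off development cost plus constant monthly costs). The function names are hypothetical, and any dollar figure for development cost is an assumption, because the table expresses it only in arbitrary units.

```c
#include <assert.h>

/* Months until the extra one-off development cost is recovered by the
 * lower monthly bill. Returns -1.0 if there is no monthly saving. */
static double break_even_months(double extra_dev_cost,
                                double monthly_before,
                                double monthly_after)
{
    assert(extra_dev_cost >= 0.0);
    double saving = monthly_before - monthly_after;
    if (saving <= 0.0)
        return -1.0;
    return extra_dev_cost / saving;
}

/* Simple TCO model: one-off development cost plus constant monthly
 * operation and support costs over the given number of months. */
static double simple_tco(double dev_cost, double monthly_ops,
                         double monthly_support, unsigned months)
{
    return dev_cost + (monthly_ops + monthly_support) * (double)months;
}
```

As a purely hypothetical illustration: taking the table's mid-range instance costs of about $2,500 and $650 per month, and assuming the migration costs an extra $37,000 in engineering time, `break_even_months(37000, 2500, 650)` gives 20 months. The real figure depends entirely on actual salaries, prices, and workload.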

9. Case Studies and Examples of io_uring Implementation

Diagram: 9. Case Studies and Examples of io_uring Implementation


io_uring has already proven itself in real-world high-load projects. Here are a few realistic scenarios demonstrating its transformative potential.

9.1. Case 1: High-Performance Key-Value Database (Redis/Memcached Equivalent)

Problem: A startup was developing a low-latency distributed Key-Value database for gaming applications. Initially, epoll was used for network I/O and libaio for data persistence on NVMe SSDs (with O_DIRECT). Upon reaching 500,000 IOPS on a single node, CPU utilization reached 70-80%, and P99 write operation latency increased to 10-15 ms due to frequent system calls and context switches between network and disk I/O. Scaling required adding a large number of nodes, leading to high cloud costs.

Solution with io_uring: The team rewrote the I/O subsystem, fully migrating to io_uring. All network operations (accept, recvmsg, sendmsg) and disk operations (read, write, fsync) were unified under a single io_uring processing loop. The following optimizations were implemented:

- Usage of `IORING_SETUP_SQPOLL`, bound to a dedicated CPU core.

- Registration of memory buffers to avoid data copying.

- Registration of file descriptors for open data files.

- Aggressive batching of operations (up to 256 SQEs per `io_uring_submit()`, when necessary).

Results:

- Throughput: Increased from 500,000 to 1.5 million IOPS on a single node (a 3x increase, i.e. a 200% gain).

- Latency (P99): Decreased from 10-15 ms to 1.5-3 ms for write operations.

- CPU Utilization: At 1 million IOPS, CPU utilization was 20-25% (including the core for SQPOLL), compared with 70-80% at 500,000 IOPS before the migration.

- Savings: The number of required cloud instances was reduced by 3 times, leading to savings of over 60% on monthly infrastructure operational costs.

This case study showed how io_uring enabled the startup to achieve performance previously only available with very expensive hardware or complex specialized solutions.

9.2. Case 2: High-Load Proxy Server / Load Balancer

Problem: A large online service used NGINX as its primary proxy server and load balancer. During peak hours, when handling millions of active TCP connections and thousands of requests per second, NGINX (based on epoll) began to consume significant CPU resources, and proxying latencies increased. This required scaling by adding many proxy instances, which increased cost and management complexity.

Solution with io_uring: The team decided to develop a custom, lightweight proxy server in C++ using io_uring. The goal was not to completely replace NGINX, but to create a specialized component for the most critical data paths. Asynchronous accept, connect, recvmsg, sendmsg io_uring operations were used. The main focus was on minimizing data copying.

- Usage of registered buffers for incoming and outgoing data to avoid repeated per-request buffer mapping between user space and kernel.

- Application of `IORING_OP_SPLICE` to move data between sockets through an intermediate pipe, without copying it to user space (zero-copy).

- Optimization of the CQ processing loop for instant reaction to network events.

Results:

- Connection Handling: The custom proxy was able to handle 2-2.5 times more active TCP connections on a single CPU core compared to NGINX.

- Latency (P99): Proxying latency decreased by 30-40% under high loads.

- CPU Utilization: For the same traffic volume, CPU utilization decreased by 50-60%.

- Savings: Allowed for a 40% reduction in the number of proxy instances, significantly decreasing cloud costs and simplifying the architecture.

This case study highlights the effectiveness of io_uring in network scenarios, especially when handling a huge number of connections and minimizing latencies is required.

9.3. Case 3: Logging and Metrics Collection System

Problem: A large monitoring and log collection system faced the challenge of writing huge volumes of data to disk. Log aggregation servers constantly operated under high I/O load, leading to high write latencies, buffer overflows, and loss of some logs during peak moments. Standard blocking write() calls with periodic fsync() were used.

Solution with io_uring: Developers redesigned the log writing component, using io_uring for all disk operations. They implemented a mechanism for asynchronous writing and asynchronous synchronization (IORING_OP_FSYNC).

- A pool of pre-allocated and registered buffers for incoming logs.

- Batching of `IORING_OP_WRITE` operations to write multiple log blocks at once.

- Usage of `IORING_OP_FSYNC` for asynchronous forced writing of data to disk at specific intervals or after a certain volume of data has accumulated.

Results:

- Write Throughput: Increased by 50-70%, allowing the system to handle peak loads without data loss.

- Write Latency (P99): Decreased by 20-30%, ensuring more stable operation.

- CPU Utilization: CPU overhead for write operations significantly reduced, freeing up resources for log processing and filtering.

- Reliability: The system became more resilient to peak I/O loads, reducing instances of "backpressure" and data loss.

This example shows that io_uring is useful not only for databases but also for any systems requiring efficient and reliable writing of large volumes of data to disk.

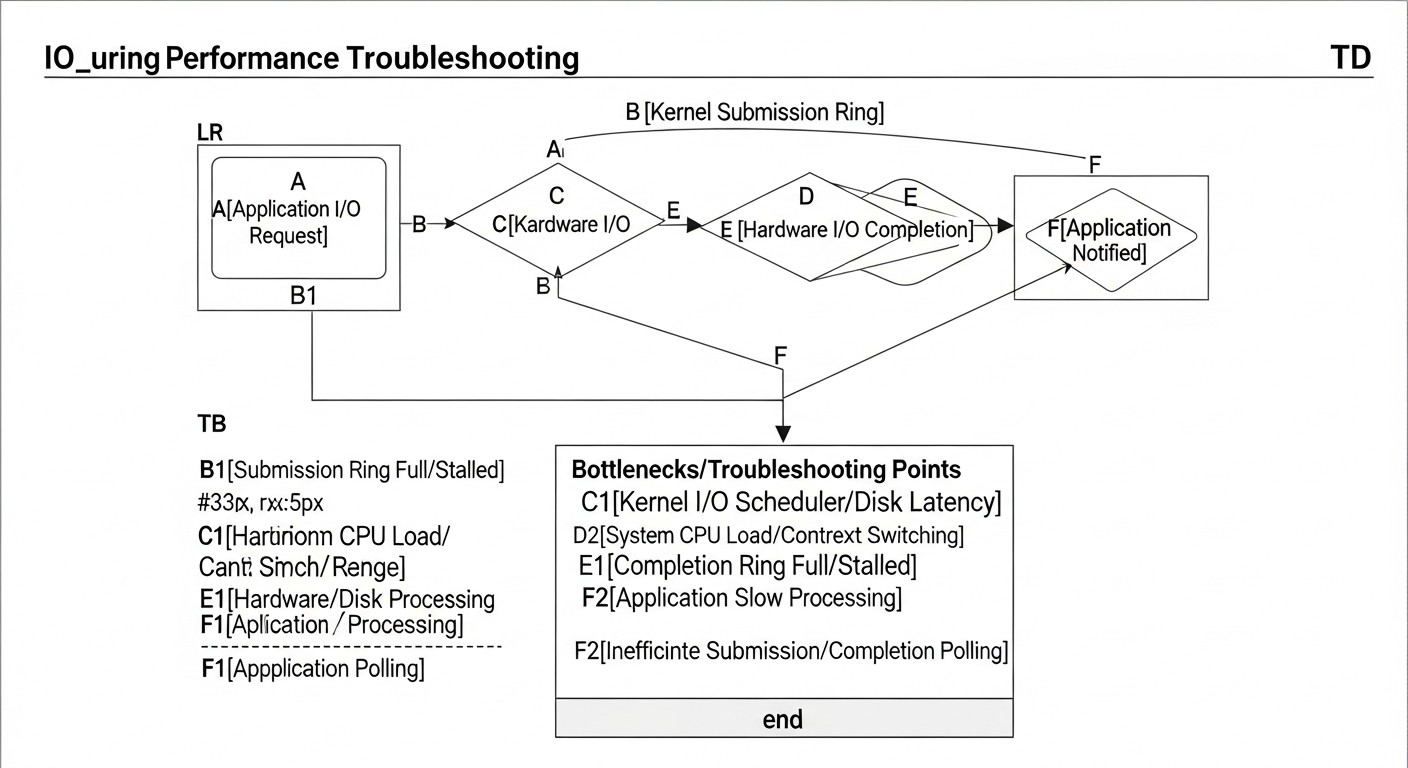

11. Troubleshooting io_uring Issues

Diagram: 11. Troubleshooting: Troubleshooting io_uring Issues


Despite its power, io_uring can be a source of complex problems requiring a deep understanding of the system. Here are typical issues and approaches to solving them.

11.1. io_uring Initialization Issues

Symptoms: io_uring_queue_init() or io_uring_queue_init_params() returns a negative value (error code).

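- Error `-ENOSYS` (Function not implemented), `-EPERM` (Operation not permitted), or `-EINVAL` (Invalid argument):

  - Cause 1: Too old Linux kernel version. io_uring requires kernel 5.1+, and for full functionality 5.10+ (optimally 6.x). A kernel without io_uring support returns `-ENOSYS`.

    - Solution: Update the kernel.

  - Cause 2: Attempting to use initialization flags (e.g., `IORING_SETUP_SQPOLL`) that are not supported by your kernel version or are incompatible with the current configuration. This typically shows up as `-EINVAL`.

    - Solution: Check the kernel documentation for your version. Remove flags one by one to identify the problematic one.

  - Cause 3: Insufficient permissions or io_uring disabled by policy. Although io_uring typically does not require root privileges, some flags or operations may be restricted, the `kernel.io_uring_disabled` sysctl (kernel 6.6+) can turn io_uring off, and some container runtimes block it through their seccomp profiles. These cases typically show up as `-EPERM`.

    - Solution: Try running the application with `sudo` for diagnosis, and check the sysctl and the container's seccomp profile. If elevated privileges help, you may need to configure capabilities or use flags that do not require them.

- Error `-ENOMEM` (Out of memory):

  - Cause: Too large queue depth (`queue_depth`), a memory shortage in the system, or a locked-memory limit (RLIMIT_MEMLOCK) that is too low. Kernels before 5.12 charge ring memory to this limit, and registered buffers count against it for unprivileged users.

  - Solution: Reduce `queue_depth`. Check available memory in the system and the `ulimit -l` value.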
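The error codes above can be turned into actionable log messages at startup. The helper below is a minimal sketch with a hypothetical name; it only maps a negative return value from `io_uring_queue_init()` or `io_uring_queue_init_params()` to a hint and does not call io_uring itself.

```c
#include <assert.h>
#include <errno.h>
#include <string.h>

/* Map a negative setup return value (a negated errno) to a diagnostic hint.
 * Falls back to the standard error string for codes not listed here. */
static const char *setup_error_hint(int ret)
{
    assert(ret < 0);
    switch (-ret) {
    case ENOSYS:
        return "kernel has no io_uring support (older than 5.1 or built without it)";
    case EPERM:
        return "io_uring blocked by policy: check kernel.io_uring_disabled and the seccomp profile";
    case EINVAL:
        return "unsupported setup flag or invalid queue size for this kernel";
    case ENOMEM:
        return "not enough memory or RLIMIT_MEMLOCK too low: reduce queue depth or raise the limit";
    case EMFILE:
    case ENFILE:
        return "file descriptor limit reached";
    default:
        return strerror(-ret);
    }
}
```

A typical use is `fprintf(stderr, "io_uring setup failed: %s\n", setup_error_hint(ret));` right after the failed initialization call, followed by a fallback to another I/O path if the application has one.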

11.2. io_uring Operations Hang or Do Not Complete

Symptoms: Requests are sent to the SQ, but the corresponding CQEs never appear. The application blocks or hangs.

- Cause 1: Insufficient or missing call to `io_uring_submit()` (or `io_uring_enter()`). The kernel does not know that new requests have appeared in the SQ.

  - Solution: Ensure that you regularly call `io_uring_submit()` after adding SQEs. If you are using `IORING_SETUP_SQPOLL`, ensure that the SQPOLL thread is active and not blocked. After an idle period the thread goes to sleep and has to be woken up, which liburing's `io_uring_submit()` does for you.

- Cause 2: Errors in SQE (incorrect file descriptors, buffers, offsets). The kernel does not silently drop such requests: it reports them as a negative `cqe->res` or as an error from `io_uring_submit()`. They look like hangs only when the application does not check these values.

  - Solution: Carefully check the parameters of each SQE and the result of every CQE. Use `io_uring_sqe_set_data()` to bind context and debugging information to each request, so that upon receiving a CQE, you can understand which request failed and which ones are still outstanding.

- Cause 3: Incorrect management of buffers or file descriptors (see section "Common Errors").

  - Solution: Ensure that buffers and FDs remain valid throughout the operation. Use registered buffers/FDs.

- Cause 4: Kernel/driver level hang or block.

  - Solution: Check `dmesg` for kernel errors. Use `bpftrace` to trace io_uring system calls and related kernel functions to see where the delay occurs.
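Many apparent hangs come from completions whose result is never inspected. A small classifier makes the possible outcomes explicit. The sketch below is an assumption about a reasonable policy for read and receive style operations; the `cqe_action` enum and the function name are hypothetical.

```c
#include <assert.h>
#include <errno.h>
#include <stddef.h>

/* Hypothetical follow-up actions for a completed read/recv request. */
enum cqe_action {
    CQE_DONE,          /* full transfer, request finished */
    CQE_RESUBMIT_REST, /* short transfer: submit a new SQE for the remainder */
    CQE_EOF,           /* zero bytes: end of file or peer closed the connection */
    CQE_RETRY,         /* transient condition: resubmit the same request */
    CQE_CANCELED,      /* request was canceled: release its resources */
    CQE_FAIL           /* hard error: log -res and tear down the request */
};

/* res is cqe->res; requested is the byte count the SQE asked for. */
static enum cqe_action classify_cqe(int res, size_t requested)
{
    assert(requested > 0);
    if (res == -EAGAIN || res == -EINTR)
        return CQE_RETRY;
    if (res == -ECANCELED)
        return CQE_CANCELED;
    if (res < 0)
        return CQE_FAIL;
    if (res == 0)
        return CQE_EOF;
    if ((size_t)res < requested)
        return CQE_RESUBMIT_REST;
    return CQE_DONE;
}
```

Calling such a function for every CQE, and logging the `CQE_FAIL` cases together with the request's user data, usually makes it clear whether a request is truly stuck in the kernel or simply failed without being noticed.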

11.3. Poor Performance or High CPU Utilization

Symptoms: The application uses io_uring, but performance does not improve or even deteriorates compared to previous methods. CPU utilization remains high.

- Cause 1: Lack of operation batching. Too frequent calls to `io_uring_submit()`.

  - Solution: Implement efficient batching (see section "Practical Tips").

- Cause 2: Not using registered buffers/file descriptors. The kernel repeats buffer mapping and file reference handling for every request.

  - Solution: Register buffers and FDs if possible for your scenario.

- Cause 3: Incorrect use of `IORING_SETUP_SQPOLL`. For example, if the load is low, the SQPOLL thread may needlessly consume a CPU core.

  - Solution: Disable SQPOLL for low-load scenarios. Ensure that the SQPOLL thread is bound to an isolated CPU core to minimize impact on other processes.

- Cause 4: Inefficient completion processing loop (CQ). For example, a blocking call to `io_uring_wait_cqe()` for each event when many events are expected, or too frequent non-blocking polls.

  - Solution: Optimize the CQ processing loop. Use `io_uring_for_each_cqe()` to process a batch of events.

- Cause 5: The problem is not I/O. io_uring optimizes I/O, but if the bottleneck is elsewhere (e.g., CPU-intensive computations, mutex locks, inefficient algorithms), then io_uring will not help.

  - Solution: Perform full application profiling using `perf` or `bpftrace` to identify the true bottleneck.

11.4. Memory Leaks

Symptoms: Application memory consumption continuously increases.

- Cause: Incorrect management of memory buffers or request contexts after operations complete.

- Solution: Ensure that each buffer or request structure allocated for an SQE is freed or reused exactly once after receiving the corresponding CQE. Use tools like Valgrind to detect leaks.
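One way to enforce the "freed or reused exactly once" rule is a fixed-size request pool with an in-flight flag. The sketch below is illustrative only: the pool size, the buffer size, and all type and function names are hypothetical, and the pool is not thread-safe.

```c
#include <assert.h>
#include <stddef.h>

#define POOL_SIZE 64   /* hypothetical; typically matched to the queue depth */

/* Per-request context; its address can serve as the SQE's user data. */
struct request {
    struct request *next_free;
    int in_flight;      /* 1 between acquire and release */
    char buf[4096];
};

struct request_pool {
    struct request slots[POOL_SIZE];
    struct request *free_list;
    size_t in_use;
};

/* Put every slot on the free list. */
static void pool_init(struct request_pool *p)
{
    p->free_list = NULL;
    p->in_use = 0;
    for (size_t i = 0; i < POOL_SIZE; i++) {
        p->slots[i].in_flight = 0;
        p->slots[i].next_free = p->free_list;
        p->free_list = &p->slots[i];
    }
}

/* Take a request before preparing an SQE; NULL means the pool is exhausted
 * and the caller should wait for completions (backpressure). */
static struct request *pool_acquire(struct request_pool *p)
{
    struct request *r = p->free_list;
    if (!r)
        return NULL;
    p->free_list = r->next_free;
    r->in_flight = 1;
    p->in_use++;
    return r;
}

/* Return a request after its CQE has been processed. */
static void pool_release(struct request_pool *p, struct request *r)
{
    assert(r->in_flight == 1);   /* catches a double release */
    r->in_flight = 0;
    r->next_free = p->free_list;
    p->free_list = r;
    p->in_use--;
}
```

If `in_use` keeps growing while the ring is idle, some completion path is not returning its request. If it is non-zero at shutdown after all CQEs have been drained, a request was leaked.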

11.5. Diagnostic Commands

- `dmesg`: Check kernel logs for errors related to io_uring or the disk subsystem.

- `/proc/<pid>/fdinfo/<fd>`: For the file descriptor of an io_uring instance, this file shows the current queue state and the registered files and buffers. On kernel 6.6+, the `kernel.io_uring_disabled` sysctl shows whether io_uring is restricted system-wide.

- `bpftrace`: Create your own scripts to trace specific kernel functions and tracepoints related to io_uring and analyze their behavior.

- `perf`: Profile the application and kernel to identify CPU hotspots.

- `strace -e trace=io_uring_setup,io_uring_enter,io_uring_register ./app`: Helps to see the io_uring system calls, but not the operations passed through the rings.

11.6. When to Contact Support or the Community

If you have exhausted all self-diagnosis options and are confident that the problem is related to the Linux kernel or io_uring specifics, do not hesitate to contact:

- Linux kernel mailing lists: the io_uring list (io-uring@vger.kernel.org) or the Linux Kernel Mailing List (LKML), for reporting potential kernel bugs or requesting new io_uring features.

- GitHub repository `liburing`: For questions related to library usage.

- Specialized forums and communities: Stack Overflow, Reddit (r/linux, r/kernel, r/programming), or communities for your programming language.

Provide as much detailed information as possible: kernel version, code, reproduction steps, error logs, benchmark results, and profiling data.

12. FAQ: Frequently Asked Questions about io_uring

1. Is io_uring always faster than traditional I/O methods?

Answer: No, not always. io_uring is designed for high-load, asynchronous I/O-intensive tasks, where it significantly reduces the overhead of system calls and context switches. For simple, low-load, or predominantly CPU-intensive applications, the overhead of io_uring's more complex API might outweigh the potential benefits, and traditional blocking calls or epoll might be more suitable or even slightly faster due to their simplicity.

2. What is the minimum Linux kernel version required for io_uring?

Answer: io_uring was introduced in Linux kernel 5.1. However, for full utilization of all features, including network operations and numerous optimizations, using Linux kernel 5.10 or newer is strongly recommended. The optimal choice for a production environment in 2026 is the 6.x LTS series kernels, which provide maximum stability and performance.

3. Is io_uring intended only for disk I/O or for network I/O as well?

Answer: io_uring unifies both types of I/O. It provides an asynchronous interface for both disk and file operations (read, write, fsync, openat) and network operations (accept, connect, recvmsg, sendmsg). This is one of its key advantages, allowing the creation of high-performance systems that handle all types of I/O under a single API.

4. How difficult is it to learn and use io_uring?

Answer: io_uring has a rather steep learning curve. Its API is low-level and requires a deep understanding of Linux kernel internals, memory management, and asynchronous programming. It is significantly more complex than epoll or libaio. However, using the user-space library liburing greatly simplifies the process by abstracting away many low-level details.

5. Can I use io_uring with high-level languages like Python, Node.js, Go, or Rust?

Answer: Yes, it is possible. For Go and Rust, there are sufficiently mature bindings and libraries that integrate io_uring into their asynchronous runtimes (e.g., io-uring for Rust/Tokio). For Python and Node.js, experimental bindings exist, typically using FFI (Foreign Function Interface) to interact with liburing. However, FFI overhead can diminish some of io_uring's benefits, and its full potential is realized in C/C++.

6. Is epoll obsolete now that io_uring exists?

Answer: No, epoll is not obsolete. It remains an effective and widely used mechanism for event-driven network I/O. io_uring is a more powerful and versatile alternative, especially for scenarios requiring maximum performance and unification of disk and network I/O. For many applications where epoll already performs well, switching to io_uring might be unnecessary, given its complexity.

7. Does io_uring support zero-copy I/O?

Answer: Yes, with caveats. Registered buffers (io_uring_register_buffers) are pinned and mapped once, which removes the per-request mapping cost; combined with O_DIRECT file I/O, data moves between the device and these buffers without an intermediate copy through the page cache. For sockets, zero-copy transmission is available through IORING_OP_SEND_ZC on kernel 6.0+. Additionally, IORING_OP_SPLICE moves data between file descriptors (one side must be a pipe) without copying it to a user buffer.

8. What is SQPOLL and when should it be used?

Answer: SQPOLL (Submission Queue Polling) is an optional io_uring mode, activated by the IORING_SETUP_SQPOLL flag during initialization. In this mode, the kernel creates a dedicated thread that polls the Submission Queue for new requests, so submitting normally requires no io_uring_enter() system call. If no requests arrive for sq_thread_idle milliseconds, the thread goes to sleep and sets the IORING_SQ_NEED_WAKEUP flag; the application must then wake it with io_uring_enter() and IORING_ENTER_SQ_WAKEUP, which liburing's io_uring_submit() does automatically. SQPOLL should only be used in extremely high-load scenarios where every system call is critical, as the polling thread occupies a CPU core while it is active.

9. In which cases should io_uring not be used?

Answer: io_uring is not recommended for:

- Low-load applications where I/O is not a bottleneck.

- Projects with tight deadlines and limited development resources.

- Applications where the primary bottleneck is in CPU-intensive computations, not I/O.

- Systems running on very old Linux kernel versions without the possibility of upgrading.

In these cases, the complexity of io_uring might cause more problems than benefits.

10. How does io_uring relate to DPDK?

Answer: io_uring and DPDK (Data Plane Development Kit) address similar I/O performance challenges, but at different levels and with different approaches. io_uring is a Linux kernel interface that provides asynchronous I/O with minimal overhead, while remaining within the standard kernel's network and disk subsystems. DPDK, in turn, is a user-space framework that "bypasses" the Linux kernel, directly managing network cards and processing packets in user space. DPDK provides even lower latencies and higher throughput for network tasks but requires specialized hardware, a more complex architecture, and does not support disk I/O. io_uring is more versatile and integrated into the kernel, whereas DPDK is a niche solution for the most demanding network tasks.

13. Conclusion

io_uring is not just another update in the Linux kernel; it's a fundamental shift in the asynchronous I/O paradigm, which by 2026 is becoming the de facto standard for high-load applications. It provides an unprecedented level of control and efficiency, allowing applications to achieve millions of I/O operations per second with minimal CPU utilization.

We have examined how io_uring addresses the pain points of traditional I/O methods by unifying disk, file, and network operations under a single, highly efficient API. Its key features — ring buffers, minimization of system calls, SQPOLL support, and resource registration — pave the way for creating systems with extremely low latencies and colossal throughput.

Economic analysis has shown that, despite a higher initial development cost due to API complexity, io_uring provides significant long-term savings. Reduced hardware resource requirements directly translate into lower cloud costs and increased product competitiveness in the market. Real-world case studies confirm these findings, demonstrating impressive performance gains in databases, proxy servers, and logging systems.

However, like any powerful tool, io_uring requires deep understanding and caution. Typical mistakes, such as lack of batching, improper buffer management, or ignoring the kernel version, can negate all its advantages. Using the liburing library, thorough testing, monitoring with perf and bpftrace, and adhering to best practices are key to successful implementation.

Final Recommendations:

- Assess your workload: If your application experiences I/O bottlenecks and has high latency and throughput requirements, io_uring is your #1 candidate.

- Update your kernel: Ensure your system is running on Linux 5.10+ (ideally 6.x LTS).

- Use `liburing`: Start with this library to simplify development.

- Batch operations: This is critically important for io_uring performance.

- Register resources: For maximum efficiency, use registered buffers and file descriptors.

- Test and monitor thoroughly: Measure real metrics and profile your application.

Next steps for the reader:

If you have read this far, it means you are ready for a deep dive into io_uring. Your next steps should include:

- Study `iouring-by-example`: Work through the examples to gain practical experience.

- Experiment with `fio`: Benchmark your current system with fio, then try using the io_uring engine.

- Pilot project: Start with a small but I/O-intensive component of your application to assess the complexity and potential benefits in your environment.

- Deep dive into documentation: Regularly consult the official Linux kernel documentation and articles by Jens Axboe.

In a world where data is the new oil and speed is currency, io_uring provides you with the tools to create truly competitive and efficient high-load applications. Master it, and you will unlock new horizons of performance for your projects in 2026 and beyond.
