
Web Application Performance Optimization: Caching Strategies with Redis and Varnish on VPS/Dedicated

TL;DR: Executive Summary

- Two-tier caching – key to success in 2026: The combination of Varnish (HTTP cache, "close" to the user) and Redis (object/data cache, "close" to the application) ensures maximum performance and fault tolerance for web applications.

- Varnish Cache for HTTP traffic: Ideal for caching full HTTP responses, static, and frequently requested dynamic content. Significantly reduces backend load and speeds up content delivery, especially on VPS/Dedicated servers.

- Redis for object and session caching: High-performance in-memory data store, indispensable for caching database query results, user sessions, temporary data, and implementing counters/queues.

- Flexible cache invalidation – critically important: Effective invalidation strategies (Soft Purge for Varnish, TTL and Pub/Sub for Redis) prevent the delivery of stale data and maintain consistency.

- Monitoring and optimization – a continuous process: Using tools like Prometheus, Grafana, Redis CLI, and Varnishlog allows tracking performance metrics, identifying bottlenecks, and configuring settings for maximum efficiency.

- Resource saving and scaling: Proper caching reduces the need for more powerful servers, delaying costly upgrades and simplifying horizontal backend scaling.

- Security and fault tolerance: It's important not only to accelerate but also to protect. Correct configuration of Varnish as a reverse proxy, as well as Redis replication with Sentinel for automatic failover, increases reliability and resilience to attacks.

3. Introduction

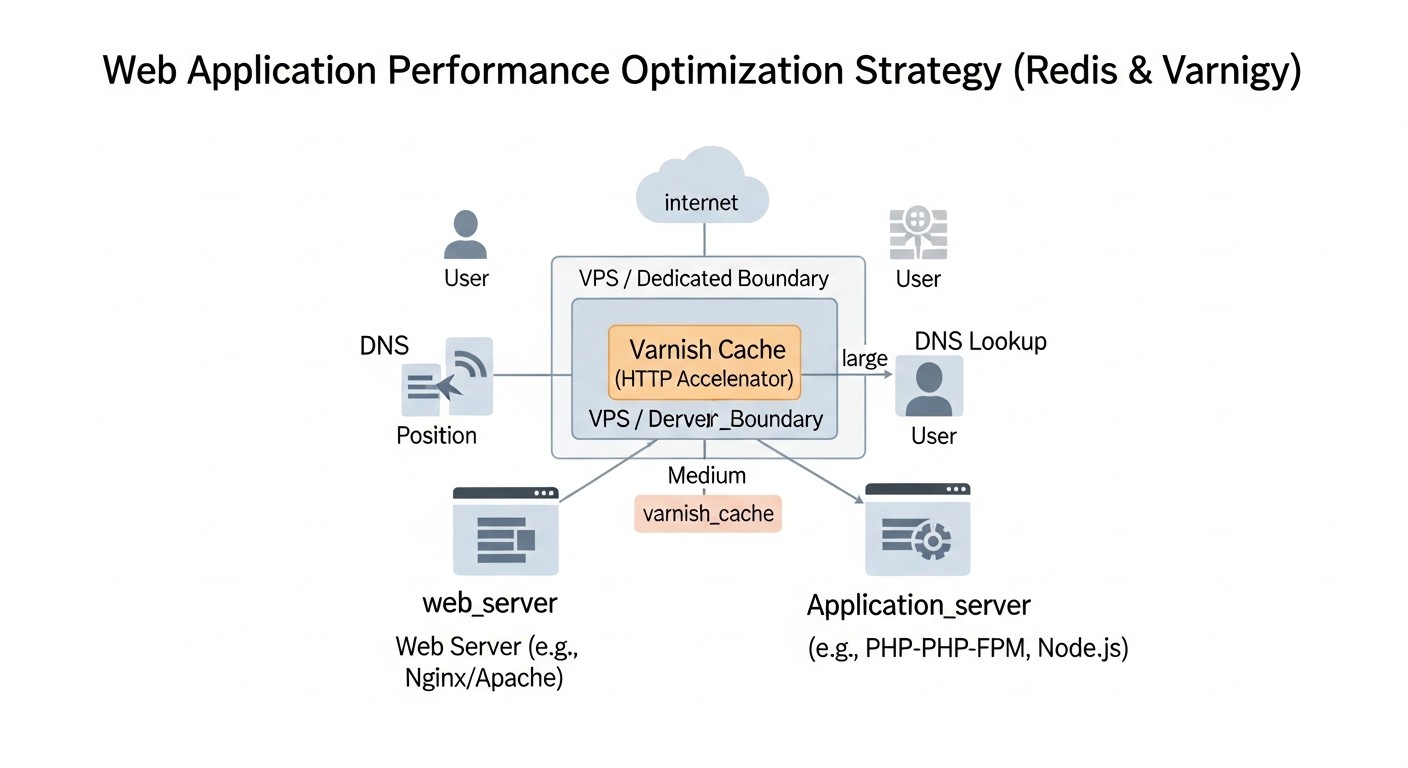


In the rapidly evolving world of web applications, where every millisecond of delay can result in the loss of a user or client, performance is no longer just a desirable feature but a critically important requirement. By 2026, users expect instant responses from any service, be it a SaaS platform, an online store, or a corporate portal. Slowly loading pages not only degrade the user experience but also negatively impact SEO rankings, conversion rates, and consequently, business revenue.

This problem is particularly relevant for projects deployed on VPS (Virtual Private Server) or Dedicated servers, where resources, though limited, are amenable to fine-tuning and optimization. Unlike serverless or fully managed cloud solutions, VPS/Dedicated provide full control over the infrastructure, which opens up vast opportunities for manual optimization but also imposes responsibility for the efficient use of every gigabyte of RAM and every CPU core.

This article aims to be a comprehensive guide to implementing and optimizing caching strategies using two powerful tools: Redis and Varnish Cache. We will explore how their synergy allows achieving phenomenal results in the speed and stability of web applications. The target audience for this guide includes experienced DevOps engineers, backend developers (regardless of the stack used: Python, Node.js, Go, PHP), SaaS project founders, system administrators, and technical directors of startups who strive to get the most out of their infrastructure and ensure the uninterrupted operation of their services.

We will delve into practical aspects, provide specific configuration examples, discuss common mistakes, and offer effective solutions. Our goal is to provide you not just with theoretical knowledge, but also a set of tools and recommendations that can be immediately applied to improve the performance of your web applications in the context of 2026.

4. Key Optimization Criteria/Factors for Performance


Before diving into the details of caching implementation, it is essential to clearly understand which metrics and factors determine web application performance and how to evaluate them. Effective optimization always begins with measurement and analysis.

4.1. Response Time (Latency)

Why it's important: This is the time elapsed from when a user sends a request until the first byte of the response is received. High latency directly correlates with a poor user experience and a high bounce rate. Google and other search engines consider response time an important ranking factor.

How to evaluate:

- TTFB (Time To First Byte): Measured by browser developer tools, Lighthouse, WebPageTest.

- Server Response Time: Metrics from APM (Application Performance Monitoring) systems like New Relic, Datadog, Prometheus.

- Ping/Traceroute: For assessing network latency between the client and the server.

The goal is to minimize TTFB, ideally to 100-200 ms for most requests, and 10-50 ms for cached ones.
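A quick way to spot-check this number from a script is sketched below. It is a minimal illustration using only the Python standard library; the function name measure_ttfb is hypothetical, and the timer starts after the TCP connection is established, so the result approximates server response time rather than the full browser-side TTFB (which also includes DNS, connection, and TLS setup).

```python
import http.client
import time


def measure_ttfb(host: str, path: str = "/", port: int = 80, timeout: float = 5.0) -> float:
    """Return seconds between sending a GET request and receiving the response headers."""
    conn = http.client.HTTPConnection(host, port, timeout=timeout)
    try:
        conn.connect()  # Connect first so TCP setup is not counted
        start = time.perf_counter()
        conn.request("GET", path)
        response = conn.getresponse()  # Returns once the status line and headers are read
        elapsed = time.perf_counter() - start
        response.read()  # Drain the body so the connection can close cleanly
        return elapsed
    finally:
        conn.close()
```

Calling it a few times against the same URL, for example measure_ttfb("127.0.0.1", "/api/products", port=80), should show the first (uncached) request taking noticeably longer than the following cached ones.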

4.2. Throughput

Why it's important: The number of requests a server can process per unit of time (e.g., RPS - requests per second). High throughput allows the application to handle a large number of concurrent users without performance degradation.

How to evaluate:

- RPS/QPS (Requests/Queries Per Second): Monitoring web server logs (Nginx, Apache), metrics from APM.

- Concurrent Connections: The number of active connections handled by the server.

- Load Testing: Using tools like Apache JMeter, k6, Locust to simulate load and determine maximum throughput.

It's important not only to consider the absolute RPS value but also its stability as the load increases.

4.3. Cache Hit Ratio

Why it's important: The percentage of requests served from the cache without querying the backend or database. The higher this metric, the lower the load on core computing resources and the faster the responses.

How to evaluate:

- varnishstat/varnishlog: varnishstat exposes aggregate hit and miss counters for the HTTP cache (MAIN.cache_hit, MAIN.cache_miss); varnishlog shows per-request detail.

- Redis INFO: The keyspace_hits and keyspace_misses metrics for Redis.

- Custom Application Metrics: Implementing a custom hit/miss counter for the object cache within the application.

For Varnish, it's desirable to achieve a 90%+ hit ratio for cacheable content. For Redis, 70% and above, depending on the data type.
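The ratio itself is simply hits divided by all lookups. The sketch below shows the arithmetic; the function names and the sample counters are made up for illustration, and the dictionary only mimics the shape of the stats section returned by Redis INFO.

```python
def hit_ratio(hits: int, misses: int) -> float:
    """Return the fraction of lookups served from cache (0.0 when there were no lookups)."""
    total = hits + misses
    return hits / total if total else 0.0


def redis_hit_ratio(info: dict) -> float:
    """Compute the ratio from a mapping shaped like the Redis INFO stats section."""
    return hit_ratio(int(info["keyspace_hits"]), int(info["keyspace_misses"]))


# Sample counters (made-up numbers for illustration)
sample = {"keyspace_hits": 7200, "keyspace_misses": 1800}
print(f"Redis hit ratio: {redis_hit_ratio(sample):.0%}")  # prints "Redis hit ratio: 80%"
```

The same hit_ratio function can be fed the MAIN.cache_hit and MAIN.cache_miss counters from varnishstat. Both sets of counters are cumulative since startup, so comparing two samples taken a few minutes apart gives a more current picture than the lifetime totals.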

4.4. Server Resource Utilization

Why it's important: Efficient use of CPU, RAM, disk I/O, and network traffic. High utilization without apparent reasons indicates bottlenecks. Low utilization might mean excessive resources or inefficient code.

How to evaluate:

- CPU Usage: top, htop, Prometheus/Grafana. Ideally, average CPU utilization should be within 60-80% under peak load, leaving headroom for spikes.

- Memory Usage: free -h, Prometheus/Grafana. It's important to monitor not only overall consumption but also caches (Varnish, Redis) and application memory usage.

- Disk I/O: iostat, Prometheus/Grafana. High disk activity often indicates inefficient database queries or a lack of caching.

- Network I/O: iftop, Prometheus/Grafana. Monitoring inbound/outbound traffic to identify anomalies and assess throughput.

Optimal resource utilization allows you to use your VPS or Dedicated server most efficiently, delaying the need for costly upgrades.

4.5. Scalability

Why it's important: The system's ability to handle increasing load by adding resources (vertical scaling) or new instances (horizontal scaling). Proper caching significantly simplifies horizontal backend scaling.

How to evaluate:

- Response Time vs. Load: How response time changes as the number of users increases. Ideally, it remains stable.

- Resource Utilization vs. Load: Linear growth in resource utilization with a linear increase in load indicates good scalability.

- Cost-effectiveness of Scaling: How much it costs to process an additional user/request.

The goal is to design the system so that adding a new backend server or Redis instance leads to a proportional increase in throughput.

Understanding these criteria is fundamental for making informed optimization decisions and allows for accurate measurement of the impact of implemented changes.

5. Comparison Table: Redis vs. Varnish (as of 2026)

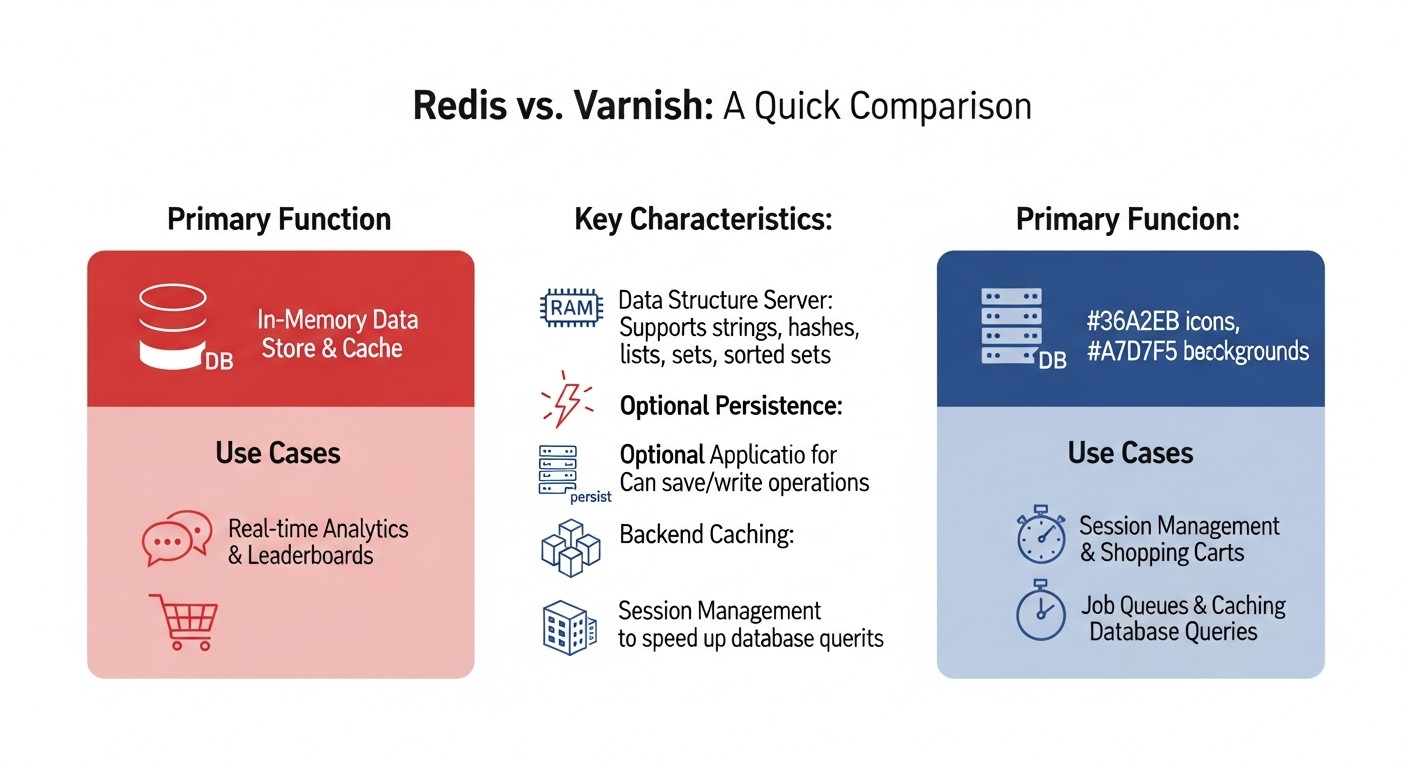


While Redis and Varnish are often used together, they solve different problems in the web application performance stack. This table will help you understand their main differences and areas of application.

| Criterion | Redis | Varnish Cache | Comments (Relevant for 2026) |
| --- | --- | --- | --- |
| Main Purpose | In-memory data store (key-value, data structures) | HTTP accelerator / Reverse proxy with caching | Redis is a backend cache, Varnish is a frontend cache. Used together, they complement each other. |
| Type of Cached Data | DB query results, sessions, tokens, counters, queues, metadata, temporary objects | Full HTTP responses (pages, API responses), static files (CSS, JS, images) | Redis works with "raw" data, Varnish with ready HTTP objects. |
| Position in the Stack | Between the application and the database | Between the client (browser) and the web server/application | Varnish sits in front of the application server, usually behind a TLS terminator such as Nginx/HAProxy. Redis is accessed from the application code. |
| Protocol | RESP (Redis Serialization Protocol) over TCP | HTTP/1.1; HTTP/2 is opt-in via the http2 feature flag or handled by the proxy in front (e.g., Nginx) | Varnish natively works with HTTP. Redis uses its own binary-safe protocol. |
| Data Persistence | Optional (RDB snapshots, AOF log) | Non-persistent, cache is cleared on restart | Redis can be used as a database, Varnish only as temporary storage. |
| SSL/TLS Support | Native since Redis 6.0 when built with TLS support (older versions require stunnel or a proxy) | Not supported in the open-source edition (requires Hitch, Nginx, or HAProxy in front of Varnish) | Standard practice: SSL termination occurs on Nginx/HAProxy, then traffic goes to Varnish over HTTP. |
| Scaling | Cluster, Sentinel (for high availability), sharding | Horizontal (multiple instances behind a load balancer), ESI for partial caching | Both scale well, but in different ways. Redis for data, Varnish for traffic. |
| Configuration Language / API | CLI, client libraries for programming languages | VCL (Varnish Configuration Language) | VCL allows for very flexible configuration of caching and request processing rules. |
| Resource Requirements | RAM (primary consumer), CPU (for serialization/deserialization) | RAM (primary consumer), CPU (for HTTP processing, VCL) | Both require sufficient RAM, but Varnish can be more CPU-intensive with complex VCL. |
| License / Cost | BSD through version 7.2; RSALv2/SSPLv1 from 7.4, with AGPLv3 added as an option in 8.0; commercial cloud services available | BSD (Open Source), commercial versions with support available | On VPS/Dedicated, both can be deployed for free. Check the license of the Redis version your distribution ships. Commercial versions offer extended features and support. |
| Typical Latency | <1 ms (in-memory) | ~1-5 ms (from cache, depends on network) | Both provide very low latency, but Redis is closer to the application. |
| Cache Invalidation | TTL, DEL and FLUSHDB/FLUSHALL commands, Pub/Sub | PURGE/BAN requests, TTL | Varnish PURGE/BAN is usually "heavier" to implement than Redis DEL. |

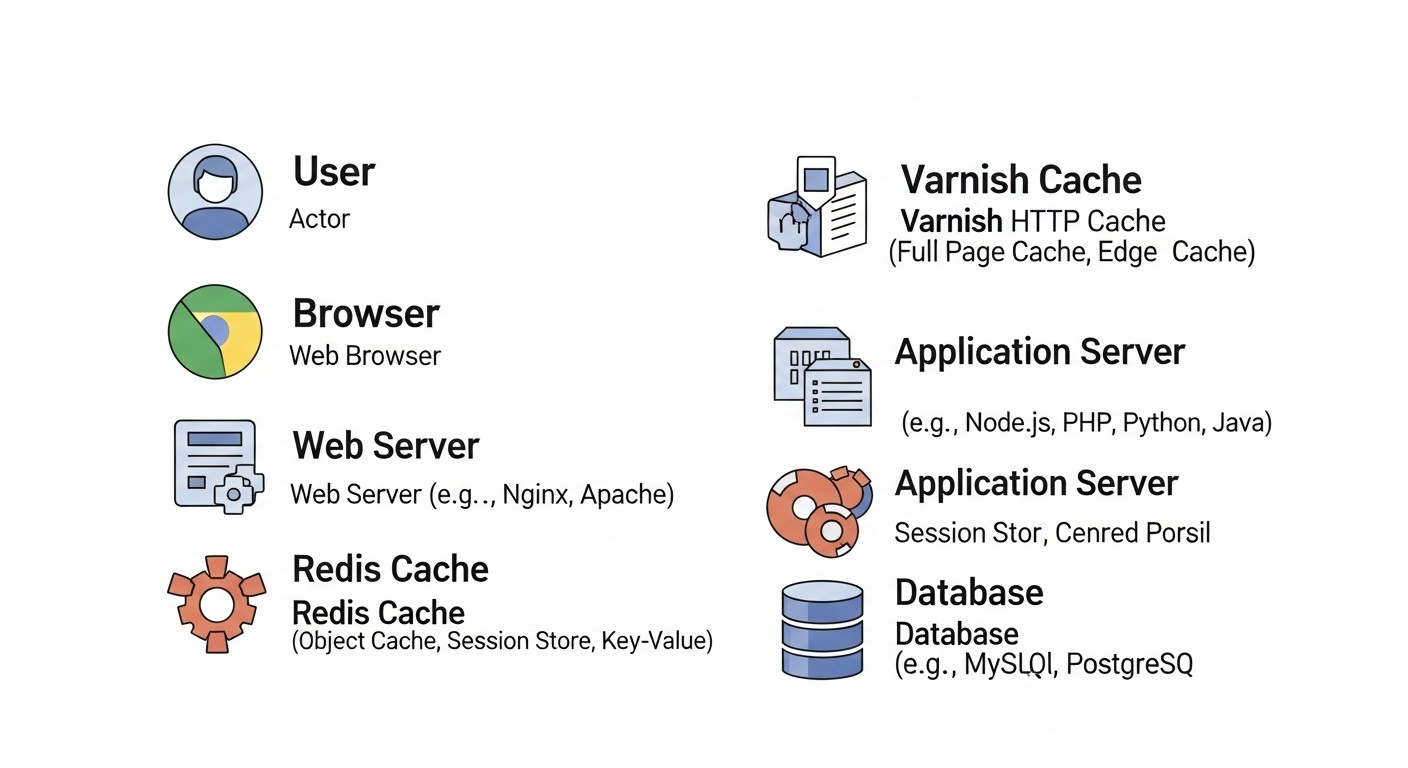

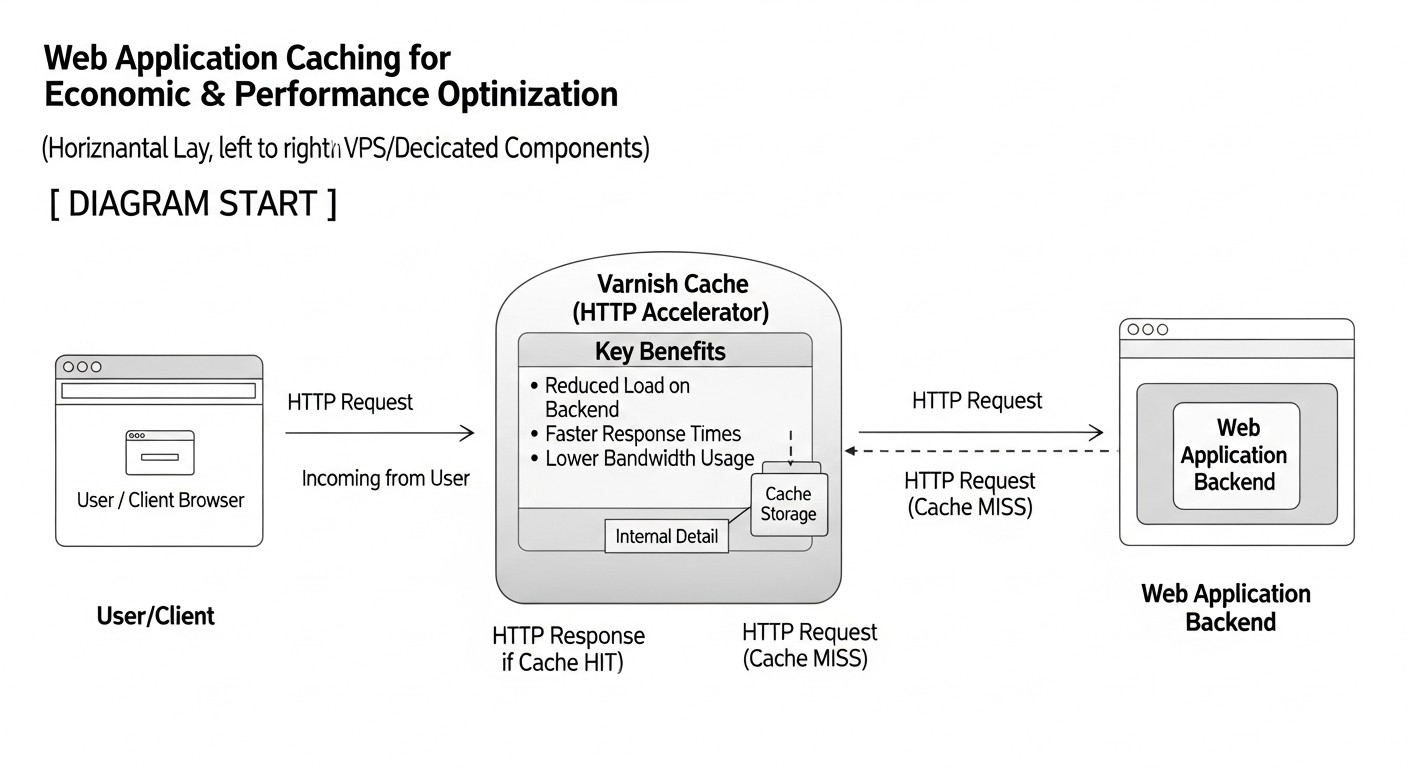

6. Detailed Overview of Redis and Varnish


To create a high-performance web application on VPS/Dedicated servers, simply installing Redis and Varnish is not enough. A deep understanding of their architecture, capabilities, and application areas is necessary to effectively integrate them into your infrastructure.

6.1. Redis: In-Memory Data Store

Redis (Remote Dictionary Server) is a high-performance, open-source, in-memory data store often referred to as "data structures on steroids." It supports a variety of abstract data types such as strings, hashes, lists, sets, sorted sets with range queries, bitmaps, HyperLogLogs, geospatial indexes, and streams. This makes Redis an incredibly versatile tool for many tasks beyond simple caching.

6.1.1. Advantages of Redis

- Incredible Speed: Since Redis stores data in RAM, read and write operations typically complete in well under a millisecond on the server, so the network round trip usually dominates the observed latency. This is critical for high-load systems where every database query can become a bottleneck.

- Data Type Flexibility: Unlike simple key-value systems, Redis allows storing complex data structures, which simplifies development and optimizes data handling. For example, hashes are ideal for caching user profiles, and sorted sets for rankings or activity feeds.

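- Atomic Operations: Redis executes each individual command atomically, because commands are processed one at a time. This guarantees data integrity for single-command operations such as INCR during concurrent access from multiple clients. Multi-step sequences still need MULTI/EXEC transactions or Lua scripts to be atomic.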

- Persistence (Optional): Redis can save data to disk (RDB snapshots or AOF log), which allows restoring the cache state after a server restart, turning it into a full-fledged NoSQL database.

- Publish/Subscribe (Pub/Sub): Built-in functionality for real-time messaging, useful for cache invalidation, notifications, or creating chats.

- Transactions and Lua Scripts: Allow executing multiple commands as a single unit or implementing complex server-side logic, minimizing network latency.

- Clustering and High Availability: Redis Sentinel provides automatic failover in case of master failure, and Redis Cluster allows horizontally scaling the data store across multiple nodes.

6.1.2. Disadvantages of Redis

- RAM Dependency: The main drawback is that all data must fit into RAM. This can be expensive for very large data volumes, especially on VPS/Dedicated.

- Limited Security by Default: Redis is designed to run inside trusted networks. Exposing it beyond localhost requires careful firewall configuration, authentication (requirepass or the ACLs available since Redis 6), and ideally TLS.

- Complexity of Cluster Setup: While Redis Cluster is powerful, its setup and management can be complex for beginners.

6.1.3. Examples of Redis Usage in 2026

- Caching DB Query Results: The most common scenario. Instead of repeatedly querying PostgreSQL or MySQL, query results are cached in Redis.

- Storing User Sessions: Especially relevant for distributed applications where sessions must be accessible from any backend instance.

- Counters and Rankings: High-performance increments/decrements, sorted lists for leaderboards.

- Task Queues: Implementing background tasks using Redis lists (e.g., with Celery for Python, BullMQ for Node.js).

- Full-Text Search (RediSearch): With the RediSearch module, you can build fast full-text search directly in Redis.

- Rate Limiting: Limiting the number of requests from a user/IP within a specific period.
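To make the rate limiting scenario concrete, here is a minimal fixed-window sketch. The function name allow_request and the key layout ratelimit:identity:window are illustrative assumptions, not a standard. The client argument can be any object with Redis-style incr and expire methods, such as a redis.Redis instance from the redis-py package.

```python
import time


def allow_request(client, identity: str, limit: int = 100,
                  window_seconds: int = 60, now=None) -> bool:
    """Fixed-window limiter: allow at most `limit` calls per window per identity."""
    current = time.time() if now is None else now
    window = int(current // window_seconds)
    key = f"ratelimit:{identity}:{window}"  # Hypothetical key layout
    count = client.incr(key)  # INCR is atomic, so concurrent workers cannot lose updates
    if count == 1:
        # First hit in this window: let the key expire once the window has passed
        client.expire(key, window_seconds)
    return count <= limit
```

A fixed window is the simplest variant and allows short bursts around window boundaries. If that matters, a sliding window built on sorted sets is a common alternative.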

6.2. Varnish Cache: High-Performance HTTP Accelerator

Varnish Cache is an open-source HTTP accelerator, or reverse proxy server, designed specifically for caching HTTP responses. It sits between the client and your web server/application (Nginx/Apache/Gunicorn/PM2), intercepts all incoming HTTP requests, and if a response for a given request is already in the cache, it delivers it instantly without contacting the backend. This significantly reduces the load on the application server and database, and also shortens the response time for the end-user.

6.2.1. Advantages of Varnish

- Phenomenal Speed: Varnish operates entirely in RAM, allowing it to deliver cached responses with minimal latency. It is optimized for handling a huge number of concurrent connections.

- Powerful VCL (Varnish Configuration Language): VCL is a domain-specific language that allows writing very flexible rules for processing HTTP requests and responses. You can configure caching rules, routing, header modification, load balancing, and much more.

- ESI (Edge Side Includes): Allows caching parts of pages independently. For example, you can cache most of a page but leave the user's shopping cart block uncached. Varnish "stitches" these parts together on the fly.

- Graceful Degradation: Varnish can continue to serve stale but still relevant objects from the cache even if the backend is unavailable (e.g., during deployment or failure). This significantly increases fault tolerance.

- HTTP/2 Support: Varnish can speak HTTP/2 when started with the http2 feature flag (-p feature=+http2). Because browsers use HTTP/2 only over TLS, it is usually paired with Nginx or HAProxy, which provide HTTP/2 and SSL termination.

- Reduced Backend Load: Varnish's main function is to absorb the bulk of HTTP traffic, allowing your application servers to focus on processing complex business logic.

6.2.2. Disadvantages of Varnish

- Lack of Built-in SSL/TLS: Varnish cannot directly handle HTTPS traffic. An SSL-terminating proxy (Nginx, HAProxy) must always be placed in front of it. This adds an additional layer to the architecture.

- Non-Persistent Cache: Varnish's cache is entirely stored in RAM and is cleared upon service restart. This means that after a restart, Varnish will have to repopulate its cache.

- Complexity of VCL Configuration: While VCL is very powerful, learning and correctly configuring it for complex scenarios can be a challenge. Incorrect configuration can lead to undesirable effects or incorrect caching.

- Not Suitable for Personal Data: Varnish should not be used for caching sensitive, user-specific information unless complex ESI mechanisms and very strict invalidation are employed.

6.2.3. Examples of Varnish Usage in 2026

- Caching Non-Personalized Pages: Home pages, category pages, blog articles, public API responses.

- Caching Static Files: CSS, JavaScript, images, fonts. While Nginx is also good for this, Varnish can add an extra layer of control.

- Accelerating API Requests: If an API returns data that changes infrequently or is public, Varnish can cache these responses.

- Graceful Degradation: Ensuring site availability even during temporary backend failures.

- Load Distribution: Simple load balancing mechanisms between multiple backends can be implemented using VCL.

The combined use of Redis and Varnish allows for the creation of a multi-layered caching system that efficiently handles both HTTP requests and internal application data, ensuring maximum performance and fault tolerance.

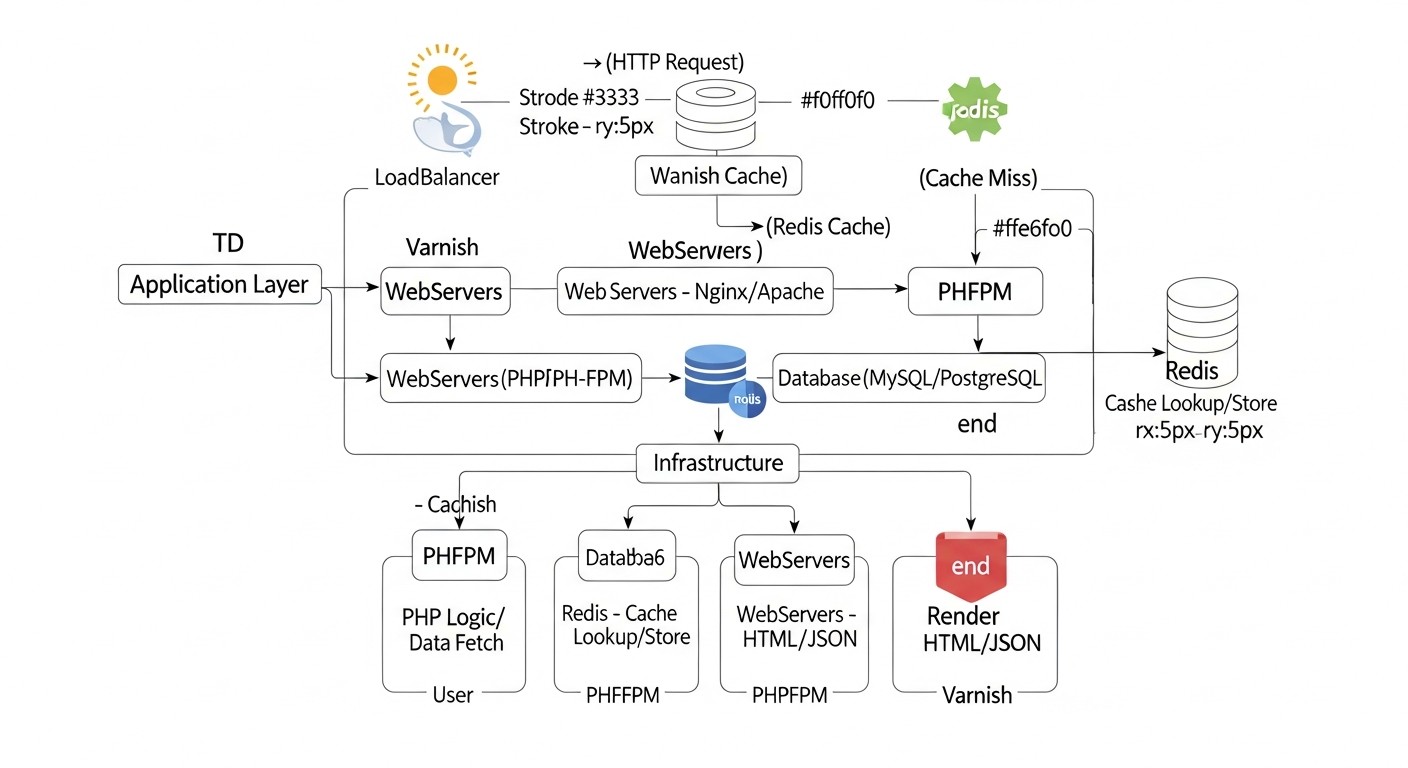

7. Practical Tips and Implementation Recommendations


Now that we understand the capabilities of Redis and Varnish, let's move on to specific steps for their implementation and configuration on VPS/Dedicated servers. We will assume you have a basic server with Ubuntu 24.04 (relevant for 2026) and a web application running, for example, via Gunicorn/PM2 behind Nginx.

7.1. Integrating Redis into the Application

Redis should be installed on the same server as your application, or on a dedicated Redis server (for large projects). Let's start by installing it:

```bash
sudo apt update
sudo apt install redis-server -y
sudo systemctl enable redis-server
sudo systemctl start redis-server
```

7.1.1. Basic Redis Configuration

Edit the file /etc/redis/redis.conf. Key parameters:

- bind 127.0.0.1 ::1: Redis should only listen on the local interface if it's used solely by the application on the same server. If Redis is on a separate server, specify the IP address accessible to the backend and configure the firewall.

- protected-mode yes: Keep enabled for security.

- maxmemory <size>mb: Set a RAM usage limit. For example, maxmemory 2048mb. This is critical for VPS/Dedicated.

- maxmemory-policy allkeys-lru: Key eviction policy when the memory limit is reached. LRU (Least Recently Used) is a good choice for caching.

- requirepass <your_strong_password>: Be sure to set a password for production environments.

```bash
sudo nano /etc/redis/redis.conf

# After changes, restart Redis
sudo systemctl restart redis-server
```

7.1.2. Integrating Redis into Application Code

Examples for different languages:

Python (with Flask):

```python
# Library installation
# pip install redis Flask-Caching

import redis
from flask import Flask, request
from flask_caching import Cache

app = Flask(__name__)
app.config['CACHE_TYPE'] = 'RedisCache'  # 'redis' is the older, deprecated name
app.config['CACHE_REDIS_HOST'] = 'localhost'
app.config['CACHE_REDIS_PORT'] = 6379
app.config['CACHE_REDIS_DB'] = 0
app.config['CACHE_REDIS_PASSWORD'] = 'your_strong_password'  # If set
cache = Cache(app)

# One shared client (with its connection pool) instead of a new connection per request
redis_client = redis.Redis(host='localhost', port=6379, db=0,
                           password='your_strong_password', decode_responses=True)


def fetch_products_from_database():
    # Placeholder for a long DB query; replace with your real data access code
    return [{'id': 1, 'name': 'Example product'}]


@app.route('/api/products')
@cache.cached(timeout=300, key_prefix='products_list')  # Cache for 5 minutes under a fixed key
def get_products():
    products = fetch_products_from_database()
    return {'products': products}


@app.route('/api/user_session')
def get_user_session():
    user_id = request.args.get('user_id')
    session_data = redis_client.get(f'session:{user_id}')
    if session_data:
        return {'session': session_data}
    return {'message': 'Session not found'}


# For cache invalidation
def invalidate_product_cache():
    with app.app_context():
        # delete_memoized() only applies to @cache.memoize functions;
        # a @cache.cached view is removed by its cache key
        cache.delete('products_list')
```

Node.js (with Express):

```javascript
// Library installation (assumes node-redis v4+ and connect-redis v8)
// npm install express redis connect-redis express-session

const express = require('express');
const session = require('express-session');
const { createClient } = require('redis');
// connect-redis v7 exported the store as require('connect-redis').default
const { RedisStore } = require('connect-redis');

const app = express();

const redisClient = createClient({
  socket: { host: 'localhost', port: 6379 },
  password: 'your_strong_password' // If set
});

redisClient.on('error', (err) => console.log('Redis Client Error', err));
// node-redis v4+ requires an explicit connect() before commands are sent
redisClient.connect().catch(console.error);

app.use(session({
  store: new RedisStore({ client: redisClient }),
  secret: 'super_secret_key_2026',
  resave: false,
  saveUninitialized: false,
  // Set secure: true in production, where the site is served over HTTPS
  cookie: { secure: false, maxAge: 86400000 } // 24 hours
}));

app.get('/api/data', async (req, res) => {
  try {
    // Check cache before querying DB
    const cached = await redisClient.get('cached_data_key');
    if (cached) {
      console.log('Serving from Redis cache');
      return res.json(JSON.parse(cached));
    }

    console.log('Serving from database');
    const dbData = await fetchDataFromDatabase(); // Long operation (your own function)
    await redisClient.setEx('cached_data_key', 300, JSON.stringify(dbData)); // Cache for 5 minutes
    res.json(dbData);
  } catch (err) {
    console.error(err);
    res.status(500).json({ error: 'Internal server error' });
  }
});
```

7.1.3. Redis Cache Invalidation Strategies

- TTL (Time To Live): The simplest method. When writing data to Redis, specify the key's time-to-live (SETEX).

- Proactive Invalidation: When data changes in the database, send a DEL command to Redis for the corresponding keys.

- Pub/Sub: Use Redis Pub/Sub to notify all application instances about the need to invalidate the cache, for example, when global settings change.

- Cache Tagging: If data depends on multiple entities, store a list of keys in Redis associated with each entity, and when an entity changes, delete all related keys.
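The cache tagging idea from the list above can be sketched with Redis sets. The helper names and the tag: key prefix are assumptions made for this illustration. The client argument is any object with Redis-style setex, sadd, smembers, and delete methods, such as a redis.Redis instance.

```python
def cache_with_tags(client, key: str, value: str, tags: list, ttl: int = 300) -> None:
    """Store a value with a TTL and record its key under every tag it depends on."""
    client.setex(key, ttl, value)
    for tag in tags:
        client.sadd(f"tag:{tag}", key)  # Hypothetical naming: one set per tag


def invalidate_tag(client, tag: str) -> int:
    """Delete every key recorded under a tag, then the tag set itself."""
    tag_key = f"tag:{tag}"
    keys = list(client.smembers(tag_key))
    if keys:
        client.delete(*keys)
    client.delete(tag_key)
    return len(keys)  # Number of keys that were recorded under the tag
```

For example, a product page cached with tags ["product:1", "category:5"] disappears as soon as invalidate_tag(client, "product:1") runs after that product is updated. Tag sets can accumulate keys that have already expired by TTL; deleting such keys again is harmless.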

7.2. Varnish Cache Configuration

Varnish will sit behind Nginx and in front of your application. Nginx will terminate SSL and proxy requests to Varnish over HTTP, and Varnish, in turn, will proxy them to your backend (Gunicorn/PM2).

7.2.1. Varnish Installation

```bash
sudo apt install varnish -y
sudo systemctl enable varnish
sudo systemctl start varnish
```

7.2.2. Nginx Configuration for Varnish

Your Nginx should listen on port 443 (HTTPS) and proxy requests to Varnish. Varnish listens on port 6081 by default; in this setup it is moved to port 80 (HTTP), so Nginx itself must not bind port 80.

Example Nginx configuration (/etc/nginx/sites-available/your_app.conf):

```nginx
server {
    # Port 80 is owned by Varnish in this setup, so Nginx binds 443 only
    listen 443 ssl http2;
    server_name your_domain.com www.your_domain.com;

    ssl_certificate /etc/letsencrypt/live/your_domain.com/fullchain.pem;
    ssl_certificate_key /etc/letsencrypt/live/your_domain.com/privkey.pem;
    ssl_session_cache shared:SSL:10m;
    ssl_session_timeout 10m;
    ssl_protocols TLSv1.2 TLSv1.3;
    ssl_ciphers "ECDHE-ECDSA-AES128-GCM-SHA256:ECDHE-RSA-AES128-GCM-SHA256:ECDHE-ECDSA-AES256-GCM-SHA384:ECDHE-RSA-AES256-GCM-SHA384:DHE-RSA-AES128-GCM-SHA256:DHE-RSA-AES256-GCM-SHA384";
    ssl_prefer_server_ciphers on;

    # Proxy all requests to Varnish, which listens on 127.0.0.1:80
    # Varnish will expect HTTP traffic
    location / {
        proxy_pass http://127.0.0.1:80; # Varnish will listen on port 80
        proxy_http_version 1.1; # Needed for keepalive connections to Varnish
        proxy_set_header Host $host;
        proxy_set_header X-Real-IP $remote_addr;
        proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
        proxy_set_header X-Forwarded-Proto $scheme;
        proxy_set_header X-Forwarded-Port $server_port;
        proxy_set_header Connection ""; # Important for HTTP/1.1
    }

    # For static files that should not be cached by Varnish (or are cached by Nginx)
    # location ~ \.(jpg|jpeg|gif|png|webp|ico|css|js)$ {
    #     expires 30d;
    #     add_header Cache-Control "public, no-transform";
    #     try_files $uri @backend; # If static file not found, pass to backend
    # }
}
```

```bash
# Validate the configuration before restarting
sudo nginx -t
sudo systemctl restart nginx
```

7.2.3. Varnish Configuration (/etc/varnish/default.vcl)

Varnish by default listens on port 6081. We will change it to 80 so Nginx can proxy requests to it.

Edit /etc/default/varnish or /etc/systemd/system/varnish.service.d/override.conf (preferred) and change the port:

```bash
# For Ubuntu 24.04, you likely need to edit the systemd unit
sudo systemctl edit varnish

# Insert:
# [Service]
# ExecStart=
# ExecStart=/usr/sbin/varnishd -F -a :80 -T localhost:6082 -f /etc/varnish/default.vcl -S /etc/varnish/secret -s malloc,2G

# The empty ExecStart= line clears the packaged command before the new one is set
# -a :80 means Varnish listens on public port 80
# (an -a listener accepts only HTTP or PROXY as its protocol, so no HTTP/2 suffix here)
# -T localhost:6082 is the management interface
# -s malloc,2G is 2GB of memory for the cache
```

Or, if you have the old method via /etc/default/varnish (less likely for 2026):

```bash
sudo nano /etc/default/varnish

# Change VARNISH_LISTEN_PORT to 80
# VARNISH_LISTEN_PORT=80
```

Now the main VCL file: /etc/varnish/default.vcl. This is your primary tool for cache management.

Assume your backend (Gunicorn, PM2, etc.) listens on port 8000.

```vcl
vcl 4.1;

# Define the backend - your application
backend default {
    .host = "127.0.0.1";
    .port = "8000";
    .probe = {
        .url = "/health"; # Endpoint for backend health check
        .timeout = 1s;
        .interval = 5s;
        .window = 5;
        .threshold = 3;
    }
}

# Clients allowed to send PURGE/BAN. Requests proxied by Nginx also arrive
# from 127.0.0.1, so reject these methods in Nginx as well.
acl purgers {
    "127.0.0.1";
    "::1";
}

# Handle incoming requests
sub vcl_recv {
    # If the request is PURGE, check IP and perform purge
    if (req.method == "PURGE") {
        if (client.ip !~ purgers) { # IP matching requires an ACL, not a string
            return (synth(403, "Forbidden"));
        }
        return (purge);
    }

    # If the request is BAN, check IP and perform ban
    if (req.method == "BAN") {
        if (client.ip !~ purgers) {
            return (synth(403, "Forbidden"));
        }
        ban("req.url ~ " + req.url + " && req.http.host == " + req.http.host);
        return (synth(200, "Ban added"));
    }

    # Always pass POST, PUT, DELETE, PATCH
    if (req.method != "GET" && req.method != "HEAD") {
        return (pass);
    }

    # Do not cache admin panel
    if (req.url ~ "^/admin") {
        return (pass);
    }

    # Never cache authenticated requests. The cookie names are assumptions
    # (Django's sessionid, express-session's connect.sid); adjust to your app.
    if (req.http.Authorization || req.http.Cookie ~ "(sessionid|connect\.sid)=") {
        return (pass);
    }

    # Remaining cookies (analytics etc.) would otherwise prevent caching.
    # This runs only after the pass rules above, so sessions keep working.
    unset req.http.Cookie;

    # Varnish appends client.ip to X-Forwarded-For itself before vcl_recv runs,
    # so it does not need to be set manually here.

    # Hash the request for caching (so different URLs with query parameters are cached differently)
    # You can normalize the URL if query parameters do not affect content
    # For example, discard UTM tags that start the query string
    # set req.url = regsub(req.url, "\?utm_.*$", "");

    return (hash);
}

# Handle responses from the backend
sub vcl_backend_response {
    # If the backend set a Cookie or marked the response private, do not cache
    if (beresp.http.Set-Cookie || beresp.http.Cache-Control ~ "(?i)private|no-store|no-cache") {
        set beresp.uncacheable = true;
        set beresp.ttl = 120s; # Remember this decision (hit-for-miss) for 2 minutes
        return (deliver);
    }

    # Cache successful responses and permanent redirects for 5 minutes
    if (beresp.status == 200 || beresp.status == 203 || beresp.status == 301) {
        set beresp.ttl = 5m; # Default cache TTL
        return (deliver);
    }

    # Do not cache errors, temporary redirects, or other statuses
    set beresp.uncacheable = true;
    set beresp.ttl = 30s;
    return (deliver);
}

# Handle responses from Varnish to the client
sub vcl_deliver {
    # Add a header to see if the response was from cache
    if (obj.hits > 0) {
        set resp.http.X-Cache = "HIT";
    } else {
        set resp.http.X-Cache = "MISS";
    }

    # Remove internal headers if not needed by the client
    unset resp.http.X-Varnish;
    unset resp.http.Via;
}

# Handle Varnish errors
sub vcl_synth {
    if (resp.status == 403) {
        set resp.http.Content-Type = "text/html; charset=utf-8";
        synthetic( {"<!DOCTYPE html>
<html>
<head><title>403 Forbidden</title></head>
<body>
<h1>403 Forbidden</h1>
<p>Access denied.</p>
</body>
</html>
"} );
        return (deliver);
    }
    return (deliver);
}
```

```bash
sudo systemctl restart varnish
```

7.2.4. Varnish Cache Invalidation
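The VCL in 7.2.3 accepts two invalidation methods from localhost: PURGE removes the cached object for one exact URL and host, while BAN treats the request URL as a regular expression and invalidates every matching object for that host. Both are ordinary HTTP requests with a custom method, so the application can send them right after it writes to the database. The sketch below uses only the Python standard library; the function name invalidate_in_varnish and its default address are assumptions that match the port 80 setup described above.

```python
import http.client


def invalidate_in_varnish(path: str, site_host: str, method: str = "PURGE",
                          varnish_host: str = "127.0.0.1", varnish_port: int = 80) -> int:
    """Send a PURGE or BAN request for `path` to Varnish and return the HTTP status."""
    if method not in ("PURGE", "BAN"):
        raise ValueError("method must be PURGE or BAN")
    conn = http.client.HTTPConnection(varnish_host, varnish_port, timeout=3)
    try:
        # The Host header must match the site, because the host is part of the cache key
        conn.request(method, path, headers={"Host": site_host})
        response = conn.getresponse()
        response.read()
        return response.status
    finally:
        conn.close()
```

For example, invalidate_in_varnish("/products", "your_domain.com") drops one page, while invalidate_in_varnish("^/products/", "your_domain.com", method="BAN") invalidates the whole section. A 403 status means the request did not come from an address in the purgers ACL.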

7.3. Combined Caching Strategy

The ideal strategy is multi-level caching:

- Browser Cache: HTTP headers Cache-Control, Expires, ETag for static and rarely changing pages.

- Varnish (Reverse Proxy/HTTP Accelerator): Caching full HTTP responses for non-personalized content and static files.

- Redis (Object Cache): Caching database query results, sessions, and intermediate computations within the application.

- In-Application Cache (in-memory): For very frequently used but rarely changing data (e.g., configuration, reference data).

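- DBMS Cache: The database's own in-memory buffers (e.g., PostgreSQL shared_buffers, the InnoDB buffer pool). The MySQL query cache is not recommended and was removed in MySQL 8.0. Note that pgBouncer is a connection pooler, not a cache.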

Each caching layer should have its own invalidation strategy and time-to-live, corresponding to the frequency of data changes. For example, data in Redis might have a TTL of 5-30 minutes, while Varnish can cache public pages for 1-2 hours, and static files for days or weeks.

Example Request Flow:

- The client requests your_domain.com/products.

- Nginx terminates SSL and passes the request to Varnish (port 80).

- Varnish checks its cache:

  - HIT: Varnish immediately serves the cached response to the client.

  - MISS: Varnish passes the request to the backend (port 8000).

- The application (backend) receives the request:

  - Attempts to retrieve data from Redis (e.g., product list).

  - If the data is in Redis (HIT), it uses it.

  - If the data is not in Redis (MISS), it queries the DB.

  - Caches the data obtained from the DB in Redis and returns the response to Varnish.

- Varnish receives the response from the backend, caches it (if allowed by VCL), and serves it to the client.

This approach ensures maximum performance, reducing the load on each subsequent layer of the stack.
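The application-side part of this flow is the cache-aside pattern. Below is a minimal sketch of it; the function name get_or_load is hypothetical, values are assumed to be JSON-serializable, and the client argument is any object with Redis-style get and setex methods, such as a redis.Redis instance.

```python
import json


def get_or_load(client, key: str, loader, ttl: int = 300):
    """Cache-aside read: return the cached value, or load it, cache it, and return it."""
    cached = client.get(key)
    if cached is not None:
        return json.loads(cached)  # HIT: skip the database entirely
    value = loader()  # MISS: fall through to the slower source
    client.setex(key, ttl, json.dumps(value))
    return value
```

A view would call it as get_or_load(redis_client, "products:list", fetch_products_from_database), where the loader is whatever function performs the real database query.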

8. Common Caching Mistakes and How to Avoid Them


Caching is a powerful tool, but its incorrect application can lead to serious problems, from serving stale data to reduced performance. Here are 5 common mistakes and ways to prevent them:

8.1. Caching Personalized Content

Mistake: Attempting to cache pages containing user-specific data (e.g., shopping cart, personal account) without proper separation. This results in one user seeing another user's data.

How to avoid:

- For Varnish: Completely skip caching for requests that carry session cookies (return (pass) in vcl_recv, leaving the Cookie header intact so the backend can still identify the user) or use ESI (Edge Side Includes) to cache only common parts of the page. Never cache responses to requests that carry an Authorization header.

- For Redis: Always use unique keys for personalized data, including the user ID (e.g., user:123:profile).

Consequences: Confidential data leakage, privacy violation, incorrect application operation, legal risks (especially in the context of GDPR/CCPA).

8.2. Ineffective or Missing Cache Invalidation

Mistake: Data in the cache becomes stale, and users continue to see outdated information. Or, conversely, the cache is cleared too often, negating all caching benefits.

How to avoid:

- TTL (Time To Live): Set an adequate time-to-live for each cache object in Redis and Varnish (SETEX for Redis, beresp.ttl for Varnish).

- Proactive invalidation: Upon any data change in the source (DB), send commands to clear the corresponding cache (DEL for Redis, PURGE/BAN for Varnish). Integrate this into ORM hooks or services responsible for writing.

- Using Pub/Sub: For distributed systems, Redis Pub/Sub can notify all backends about the need to clear local cache or specific keys in Redis.

Consequences: Users see outdated data, leading to dissatisfaction, order errors, and incorrect reports. Low Cache Hit Ratio.
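Proactive invalidation can be sketched as a single write path that updates the DB, deletes the Redis key, and asks Varnish to purge the page. This is a hedged example: it assumes your VCL accepts the `PURGE` method from the application's IP, that the client object exposes `delete` like the `redis` Python package, and all function names, URLs, and hosts are hypothetical.

```python
import urllib.request
from typing import Any, Callable, Optional


def build_purge_request(varnish_base_url: str, path: str,
                        host: str) -> urllib.request.Request:
    """Build (but do not send) an HTTP PURGE request for a single URL."""
    return urllib.request.Request(
        varnish_base_url.rstrip("/") + path,
        method="PURGE",
        headers={"Host": host},
    )


def update_and_invalidate(client: Any, save_to_db: Callable[[], None], key: str,
                          purge_request: Optional[urllib.request.Request] = None,
                          send: Callable = urllib.request.urlopen) -> None:
    """Write to the DB first, then drop the stale cache entries."""
    save_to_db()                 # 1. update the source of truth
    client.delete(key)           # 2. remove the stale Redis entry (DEL)
    if purge_request is not None:
        send(purge_request, timeout=2)  # 3. ask Varnish to drop the cached page
```

Writing to the DB before invalidating keeps the window in which a reader can re-cache the old value as small as possible.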

8.3. Caching Errors or Undesirable Content

Mistake: Varnish or Redis cache pages with errors (404, 500) or redirects (3xx), which are then served to all users.

How to avoid:

- In VCL: Explicitly specify which HTTP statuses can be cached (`if (beresp.status == 200) { set beresp.ttl = ... }`). Do not cache 4xx, 5xx.

- In the application: Ensure that your Redis caching code does not store erroneous database query results.

- Health Checks: Configure `.probe` in Varnish for the backend so that Varnish knows when the backend is unhealthy and can use grace or serve errors without caching.

Consequences: Users constantly see errors, even if the backend has already been fixed. Reduced trust in the service.

8.4. Insufficient Memory for Cache

Mistake: Allocating too little RAM for Redis or Varnish, leading to constant data eviction and a low cache hit ratio.

How to avoid:

- Monitoring: Regularly monitor memory usage metrics (`redis-cli INFO memory`, `varnishstat`) and Cache Hit Ratio.

- Planning: Estimate the volume of data you plan to cache. For Varnish, this depends on page size and the expected number of unique URLs. For Redis, it depends on the volume of data you want to store.

- Eviction policies: For Redis, use an appropriate `maxmemory-policy` (e.g., `allkeys-lru` or `volatile-lru`).

- Upgrade: If necessary, consider upgrading your VPS/Dedicated server to a larger amount of RAM or moving Redis/Varnish to separate instances.

Consequences: The cache operates inefficiently; most requests still go to the backend/DB. CPU and I/O load increases, and overall performance decreases.
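The planning step can start from a rough back-of-the-envelope estimate. The sketch below multiplies object count by average object size and a per-object overhead factor; the 1.3 factor and the example numbers are assumptions for illustration only, so measure your own workload before sizing a server.

```python
def estimate_cache_memory_mb(object_count: int, avg_object_bytes: int,
                             overhead_factor: float = 1.3) -> float:
    """Rough RAM estimate in MiB: objects x average size x overhead."""
    # overhead_factor is an assumed allowance for per-object metadata
    # and allocator fragmentation; it is not a measured value.
    return object_count * avg_object_bytes * overhead_factor / (1024 * 1024)


# Example: 50,000 unique pages of about 40 KiB each.
pages_mb = estimate_cache_memory_mb(50_000, 40 * 1024)
```

With these example inputs the estimate comes to roughly 2.5 GiB, which would already be tight on a 4 GB VPS that also runs the application and database.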

8.5. Lack of Caching Metrics Monitoring

Mistake: Implementing caching without subsequently tracking its effectiveness and impact on the system.

How to avoid:

- Tools: Use Prometheus/Grafana to collect and visualize `varnishstat` and `redis-cli INFO` metrics, as well as your application's own metrics (Cache Hit/Miss).

- Alerting: Configure alerts for a critical drop in Cache Hit Ratio, high memory consumption, or errors in the operation of caching systems.

- Regular analysis: Periodically analyze Varnish (`varnishlog`) and Redis logs to identify inefficient caching rules or problematic requests.

Consequences: Inability to assess the real effect of caching, missing performance degradation, difficulties in debugging and optimization. You don't know if your caching system is working as it should.
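The Cache Hit Ratio itself is simple arithmetic: hits divided by hits plus misses. As a small sketch, the functions below parse the text that `redis-cli INFO stats` prints and compute the ratio from its `keyspace_hits` and `keyspace_misses` counters; the function names and the sample numbers are illustrative. The same formula applies to the `MAIN.cache_hit` and `MAIN.cache_miss` counters from `varnishstat`.

```python
def parse_redis_info(text: str) -> dict:
    """Parse 'key:value' lines from `redis-cli INFO` output into a dict."""
    stats = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and section headers such as '# Stats'
        key, _, value = line.partition(":")
        stats[key] = value
    return stats


def hit_ratio(hits: int, misses: int) -> float:
    """Return hits / (hits + misses), or 0.0 when there is no traffic yet."""
    total = hits + misses
    return hits / total if total else 0.0


sample = "# Stats\r\nkeyspace_hits:900\r\nkeyspace_misses:100\r\n"
info = parse_redis_info(sample)
ratio = hit_ratio(int(info["keyspace_hits"]), int(info["keyspace_misses"]))
```

For the sample input the ratio is 0.9, meaning 90% of key lookups were served from Redis.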

9. Checklist for Practical Application

This step-by-step algorithm will help you implement and optimize caching strategies with Redis and Varnish.

- 1. Analyze current performance:

- Measure the current Time To First Byte (TTFB), Requests Per Second (RPS), and resource utilization (CPU, RAM, I/O) of your application without caching.

- Identify the slowest queries and most frequently requested data/pages.

- Use tools like Apache JMeter, k6, or Locust for load testing.

- 2. Plan caching architecture:

- Determine which data will be cached in Redis (sessions, DB objects, counters) and which in Varnish (HTTP responses, static files, public APIs).

- Design an invalidation strategy for each data type.

- Estimate the required RAM for Redis and Varnish.

- 3. Prepare the server:

- Ensure that your VPS/Dedicated server has enough RAM and CPU to run Redis and Varnish, in addition to your application and database.

- Configure the firewall (UFW, iptables) to restrict access to Redis (6379) and Varnish (80, 6081) ports only from necessary IP addresses.

- 4. Install Redis and perform basic configuration:

- Install `redis-server`.

- Edit `/etc/redis/redis.conf`: set `maxmemory`, `maxmemory-policy`, `requirepass`, `bind`.

- Restart Redis and ensure it is running.

- 5. Integrate Redis into the application:

- Install the Redis client library for your language (`redis` for Python, `ioredis` for Node.js).

- Implement caching for sessions, database query results, and counters.

- Implement cache invalidation mechanisms (TTL, proactive clearing upon data changes).

- 6. Install Varnish and perform basic configuration:

- Install `varnish`.

- Configure Varnish to listen on port 80 by modifying the systemd unit or `/etc/default/varnish`.

- Edit `/etc/varnish/default.vcl`: define the backend, basic caching rules for `vcl_recv` and `vcl_backend_response`, and PURGE/BAN handling.

- Restart Varnish.

- 7. Configure Nginx as an SSL terminator in front of Varnish:

- Modify Nginx configuration to listen on port 443 (HTTPS) and proxy traffic to Varnish (port 80) over HTTP.

- Ensure Nginx passes the correct `X-Real-IP` and `X-Forwarded-For` headers.

- Restart Nginx.

- 8. Test caching:

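- Verify that Varnish caches pages (look for `X-Cache: HIT`, assuming your VCL sets this custom header, or for an `Age` header greater than 0 on repeated requests).

- Verify that Redis is used by the application (`redis-cli INFO stats | grep keyspace_hits`).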

- Perform load testing with caching enabled and compare the results with initial metrics.

- Ensure that cache invalidation works correctly.

- 9. Configure monitoring and alerting:

- Install Prometheus and Grafana.

- Configure exporters for Redis (`redis_exporter`) and Varnish (`varnish_exporter`).

- Create dashboards to track Cache Hit Ratio, memory usage, CPU, RPS.

- Configure alerts for critical metrics (e.g., low Hit Ratio, memory overflow).

- 10. Continuous optimization:

- Regularly analyze Varnish (`varnishlog`) and Redis logs.

- Optimize VCL rules and application caching logic based on real data.

- Consider using ESI for more granular caching.

- Monitor Redis and Varnish updates, use current versions.
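For step 8, the HIT check can be automated once you have a response's headers. The sketch below is one possible approach: it first looks at `X-Cache`, which is a custom header that exists only if your VCL sets it, and otherwise falls back to `X-Varnish`, which carries two transaction IDs on a hit and one on a miss. The function name is hypothetical.

```python
def looks_like_cache_hit(headers: dict) -> bool:
    """Guess from response headers whether Varnish served a cached object."""
    normalized = {name.lower(): value for name, value in headers.items()}
    x_cache = normalized.get("x-cache")
    if x_cache is not None:
        # Custom header: only present if the VCL adds it.
        return x_cache.strip().upper().startswith("HIT")
    # On a hit, X-Varnish holds the current request ID plus the ID of the
    # request that originally stored the object.
    return len(normalized.get("x-varnish", "").split()) == 2
```

Request the same URL twice (for example with `curl -I`) and feed the second response's headers to the function; the first request is expected to be a MISS.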

10. Cost Calculation / Caching Economics

Diagram: 10. Cost Calculation / Caching Economics


Implementing Redis and Varnish on VPS/Dedicated servers, while requiring initial effort, brings significant long-term savings. By 2026, the cost of cloud resources continues to rise, making optimization on self-hosted servers even more attractive. The main idea: caching allows you to "squeeze" more performance out of fewer or less powerful servers.

10.1. Direct Savings

- Reduced CPU demand: Fewer requests reach the backend and database, which reduces CPU load. This allows for the use of less powerful (and cheaper) VPS or defers upgrading to the next tariff plan.

- Reduced RAM demand: Although Redis and Varnish themselves consume RAM, they allow the DB and application to operate with less data in memory, as many requests are served by the cache.

- Reduced I/O operations: Fewer disk requests (DB) mean less load on the disk subsystem, which is critical for SSD performance and longevity.

- Reduced network traffic (for external DBs/APIs): If Redis caches responses from external services or a remote DB, the volume of outbound/inbound traffic decreases, which can reduce data transfer bills.

10.2. Indirect Savings and Benefits

- Improved user experience: A fast website = happy users = higher conversion = more revenue.

- Improved SEO: Google and other search engines prefer fast websites, which improves search rankings.

- Reduced downtime risks: Varnish with graceful degradation can serve old content even if the backend is down. Redis Sentinel ensures high availability for cached data.

- Simplified scaling: Backend servers become "lighter," making them easier to scale horizontally by adding new instances.

- Reduced support costs: Less loaded servers operate more stably, require fewer interventions, and recover faster from failures.

10.3. Hidden Costs

- Developer/DevOps time: Initial setup and integration require time and expertise. This is the most significant "hidden" cost.

- Monitoring: Implementing and maintaining a monitoring system (Prometheus, Grafana) also requires resources.

- Architectural complexity: Adding new components increases system complexity, which can make debugging more difficult.

- "Cold start" of the cache: After restarting Varnish or Redis, the cache is empty, which temporarily increases the load on the backend until the cache is populated.

10.4. Calculation Examples for Different Scenarios (2026)

Assume the cost of VPS/Dedicated servers (CPU, RAM, Disk) in 2026 is as follows:

- Basic VPS (2 vCPU, 4 GB RAM, 80 GB SSD): $25/month

- Medium VPS (4 vCPU, 8 GB RAM, 160 GB SSD): $50/month

- Powerful VPS (8 vCPU, 16 GB RAM, 320 GB SSD): $100/month

- Dedicated Server (16 vCPU, 32 GB RAM, 1 TB SSD): $300/month

Scenario 1: Small SaaS Project (up to 1000 RPS)

Initially: One medium VPS ($50/month) with Nginx + Backend + PostgreSQL. Load reaches 80% CPU.

Without caching:

- Performance: ~1000 RPS

- Cost: $50/month

- Risk: If traffic grows to 1200 RPS, an upgrade to a powerful VPS ($100/month) will be required.

With caching (Varnish + Redis on the same VPS):

- Additional costs: 1-2 days of DevOps work (e.g., $500).

- Result: Backend load reduced by 60%, CPU drops to 30%.

- Performance: The same medium VPS can now handle up to 2500 RPS.

- Cost: Still $50/month.

- Savings: $50/month (upgrade deferral) + improved UX/SEO. Payback in 10 months.

Scenario 2: Medium E-commerce Store (up to 5000 RPS)

Initially: Two powerful VPS ($100/month each, total $200/month) with a load balancer, Nginx + Backend + PostgreSQL (on a separate VPS). Backend load 70-85% CPU.

Without caching:

- Performance: ~5000 RPS

- Cost: $200/month (2x powerful VPS) + $50 (PostgreSQL VPS) = $250/month.

- Risk: If traffic grows to 7000 RPS, a third powerful VPS (+$100/month) will be required.

With caching (Varnish on frontend, Redis on backends):

- Additional costs: 3-5 days of DevOps work (e.g., $1000).

- Architecture:

- 1 powerful VPS for Varnish (can be combined with Nginx).

- 2 medium VPS for the backend (with Redis).

- 1 medium VPS for PostgreSQL.

- Result: Varnish caches 80% of requests, Redis reduces DB load by 70%.

- Performance: The overall system easily handles 10000+ RPS.

- Cost: $100 (Varnish) + $100 (2x medium VPS) + $50 (PostgreSQL) = $250/month.

- Savings: Possibly not direct monetary savings, but significantly increased resilience and scalability without increasing current costs. Upgrade deferred for a much longer period.
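The arithmetic behind both scenarios can be reproduced in a few lines. This sketch uses only the illustrative prices and DevOps costs given above; the function and tier names are hypothetical, and the PostgreSQL VPS in Scenario 2 is counted as a medium VPS because it is priced at $50.

```python
# Assumed monthly prices from the list above, in USD.
PRICES = {"basic": 25, "medium": 50, "powerful": 100, "dedicated": 300}


def monthly_cost(servers: dict) -> int:
    """Sum the monthly price of a fleet given as {tier: count}."""
    return sum(PRICES[tier] * count for tier, count in servers.items())


def payback_months(one_time_cost: float, monthly_saving: float) -> float:
    """Months until a one-time investment is covered by monthly savings."""
    return one_time_cost / monthly_saving


# Scenario 1: $500 of DevOps work avoids the medium -> powerful upgrade.
deferred_upgrade = monthly_cost({"powerful": 1}) - monthly_cost({"medium": 1})
scenario1_payback = payback_months(500, deferred_upgrade)  # 10.0 months

# Scenario 2: same monthly budget before and after, including PostgreSQL.
scenario2_before = monthly_cost({"powerful": 2, "medium": 1})  # 250
scenario2_after = monthly_cost({"powerful": 1, "medium": 3})   # 250
```

Scenario 2 shows no direct saving in these numbers; the benefit there is the extra headroom bought for the same budget.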

Table with calculation examples (simplified):

| Parameter | Without Caching | With Caching (Varnish+Redis) | Benefit/Savings |
|---|---|---|---|
| Required number of powerful VPS | 2 | 1 (for Varnish) + 2 medium (for backend) | Reduced reliance on expensive powerful servers |
| Total VPS cost/month | $200 (2x powerful) | $200 (1x powerful + 2x medium) | Same budget, but significantly higher RPS/resilience |
| Max. RPS (approx.) | 5000 | 10000+ | 2x or more increase in throughput |
| % CPU on backend | 70-85% | 20-40% | Significant load reduction, more headroom |
| Return on Investment (DevOps time) | N/A | 3-6 months (due to upgrade deferral and revenue growth) | Fast return on expertise investment |

As seen from the examples, investments in caching on VPS/Dedicated servers pay off very quickly, especially as a project grows. This is not just technical optimization, but a strategic business decision.

11. Case Studies and Real-World Examples

Diagram: 11. Case Studies and Real-World Examples


Personal experience and project observations show that the combination of Redis and Varnish is one of the most effective tools for improving web application performance and stability. Here are a few realistic scenarios.

11.1. Case 1: High-Load News Portal on Python/Django

Problem: A news portal with millions of views per month, running on Django, experienced serious performance issues during peak loads (e.g., when breaking news was released). Average response time reached 800-1200 ms, backend servers (multiple VPS) constantly ran at 90%+ CPU, and the database (PostgreSQL) was overloaded with requests. 502/504 errors were frequently observed.

Solution:

- Varnish Cache on the frontend: Varnish was installed behind each Nginx instance (which terminated SSL). VCL was configured to cache all news pages, category pages, and static files with TTLs ranging from 5 minutes to 1 hour. A system of PURGE requests from the Django admin panel was implemented upon news publication or update.

- Redis for Django object caching: Redis Cache Backend was configured in Django to cache the results of complex DB queries (e.g., lists of popular news, comments, aggregated data), as well as to store user sessions. TTL for this data varied from 1 to 15 minutes.

- Graceful Degradation: A `grace` policy was configured in Varnish, allowing it to serve stale content from the cache if the backend was unresponsive, which significantly increased fault tolerance during deployments or temporary failures.
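The Redis part of such a setup might look like the following Django settings fragment. It is only a sketch: it assumes Django 4.0 or newer, which ships a built-in Redis cache backend (the project described here may well have used a third-party backend instead), and the Redis URL, key prefix, and 5-minute default timeout are illustrative values.

```python
# settings.py fragment (assumed values, adjust to your environment)
CACHES = {
    "default": {
        # Built-in Redis backend, available since Django 4.0.
        "BACKEND": "django.core.cache.backends.redis.RedisCache",
        "LOCATION": "redis://127.0.0.1:6379/1",
        "TIMEOUT": 300,        # default TTL in seconds (5 minutes)
        "KEY_PREFIX": "news",  # hypothetical prefix to namespace this site's keys
    }
}

# Store user sessions in the same cache instead of the database.
SESSION_ENGINE = "django.contrib.sessions.backends.cache"
SESSION_CACHE_ALIAS = "default"
```

Individual views or queries can still override the default timeout, for example to keep a "popular news" list for 1 minute and aggregated data for 15.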

Results:

- Response time: Average response time for cached pages decreased to 50-100 ms. Even for uncached pages, it reduced to 300-400 ms due to reduced DB load.

- CPU load: Load on backend servers dropped to 20-40% during normal times and to 60-70% during peak moments.

- Cache Hit Ratio: Varnish achieved an 85-90% Cache Hit Ratio for news pages, and Redis reached 70-80% for the object cache.

- Savings: The need for an urgent server upgrade was eliminated. In fact, the purchase of more powerful Dedicated servers was postponed for a year and a half, saving tens of thousands of dollars.

- Stability: The number of 5xx errors decreased to almost zero, and the site became significantly more resilient to peak loads.

11.2. Case 2: SaaS Platform for Project Management on Node.js/Express

Problem: A SaaS platform actively using an API for frontend (React) interaction with the backend (Node.js/Express). Although most data was personalized, some API endpoints (project lists, users, directories) were public or partially public in nature. Load on API servers and MongoDB was high, especially when loading dashboards with many widgets.

Solution:

- Varnish for public and partially public APIs: Varnish was placed behind Nginx (which terminated SSL). Strict caching rules were defined in VCL for API endpoints returning reference data or lists that are not updated frequently. Authorized requests were passed straight through to the backend, while public responses and fragments keyed by project or user ID (assembled with ESI) were served from the cache.

- Redis for sessions and object caching: Redis was used to store all user sessions, which allowed for easy scaling of Node.js servers. Additionally, Redis cached the results of complex aggregations from MongoDB (e.g., monthly project statistics), which were expensive to recompute.

- Pub/Sub for invalidation: When key data changed (e.g., project status, adding a new user), the Node.js server published an event to Redis Pub/Sub. Other Node.js servers subscribed to this channel received a notification and invalidated corresponding keys in Redis or sent PURGE requests to Varnish.
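The project in this case ran on Node.js, but the Pub/Sub invalidation idea is language-independent. Below is a hedged Python sketch of it, assuming a client that exposes `publish` and `delete` like the `redis` Python package; the channel name, message format, and function names are all hypothetical.

```python
import json
from typing import Any, Iterable

CHANNEL = "cache-invalidation"  # hypothetical channel name


def publish_invalidation(client: Any, keys: Iterable[str]) -> None:
    """Writer side: announce which cache keys have become stale."""
    client.publish(CHANNEL, json.dumps({"keys": list(keys)}))


def handle_message(client: Any, message: dict) -> int:
    """Subscriber side: delete the announced keys, return how many were named."""
    if message.get("type") != "message":
        return 0  # ignore subscribe/unsubscribe confirmations
    keys = json.loads(message["data"])["keys"]
    if keys:
        client.delete(*keys)
    return len(keys)


# Typical subscriber loop with the `redis` package (not executed here):
#   pubsub = client.pubsub()
#   pubsub.subscribe(CHANNEL)
#   for message in pubsub.listen():
#       handle_message(client, message)
```

Redis Pub/Sub is fire-and-forget: a subscriber that is offline misses the message, so TTLs remain useful as a safety net.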

Results:

- API Performance: Response time for cached API requests decreased from 200-500 ms to 10-30 ms. Overall dashboard performance significantly improved.

- MongoDB Load: The number of requests to MongoDB decreased by 40-50%, which avoided costly horizontal database scaling.

- Backend Scalability: Thanks to centralized sessions in Redis, adding new Node.js servers became a trivial task.

- Resilience: The system became more resilient to "flash mobs" when a large number of users simultaneously accessed the platform.

These cases demonstrate that Redis and Varnish, when properly integrated, can solve a wide range of performance and scalability problems, while ensuring high availability and resource savings.

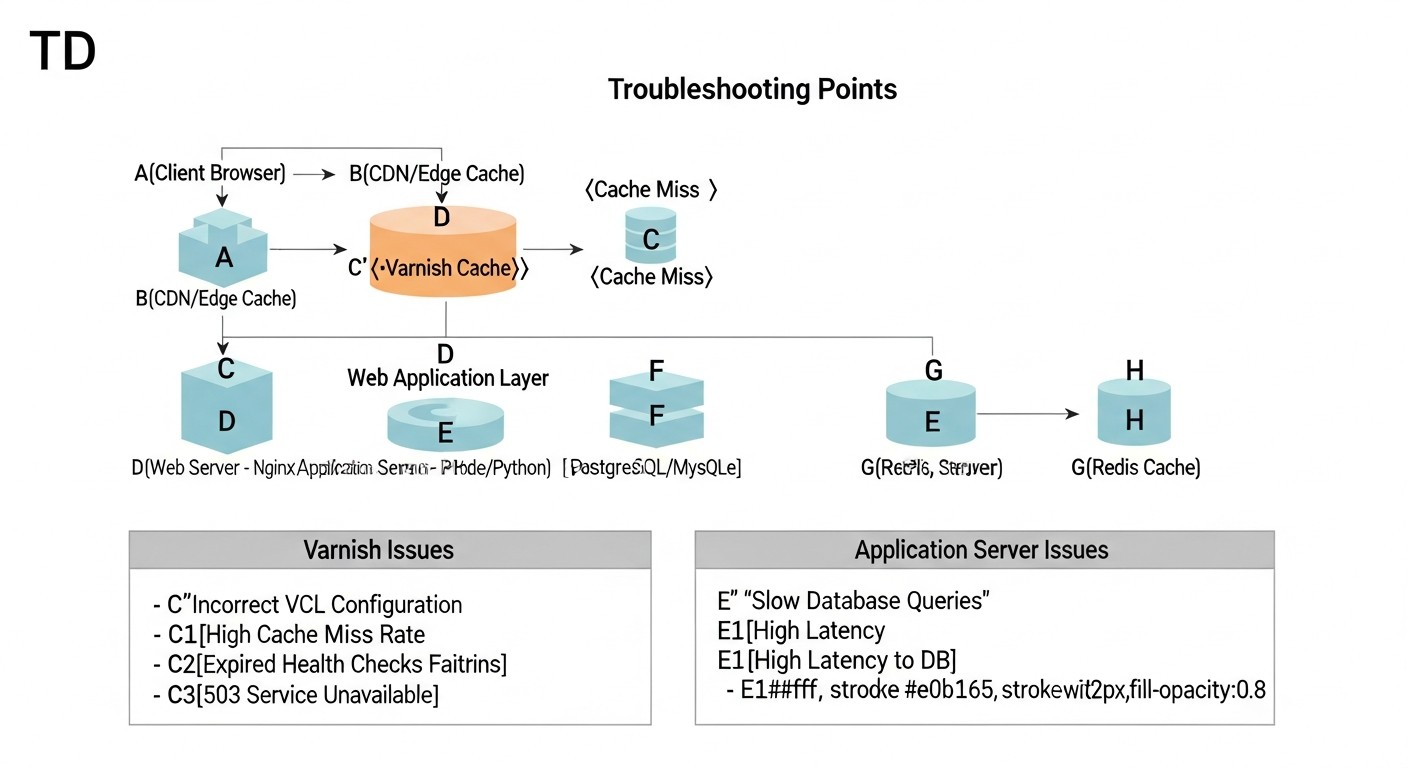

13. Troubleshooting: Problem Solving

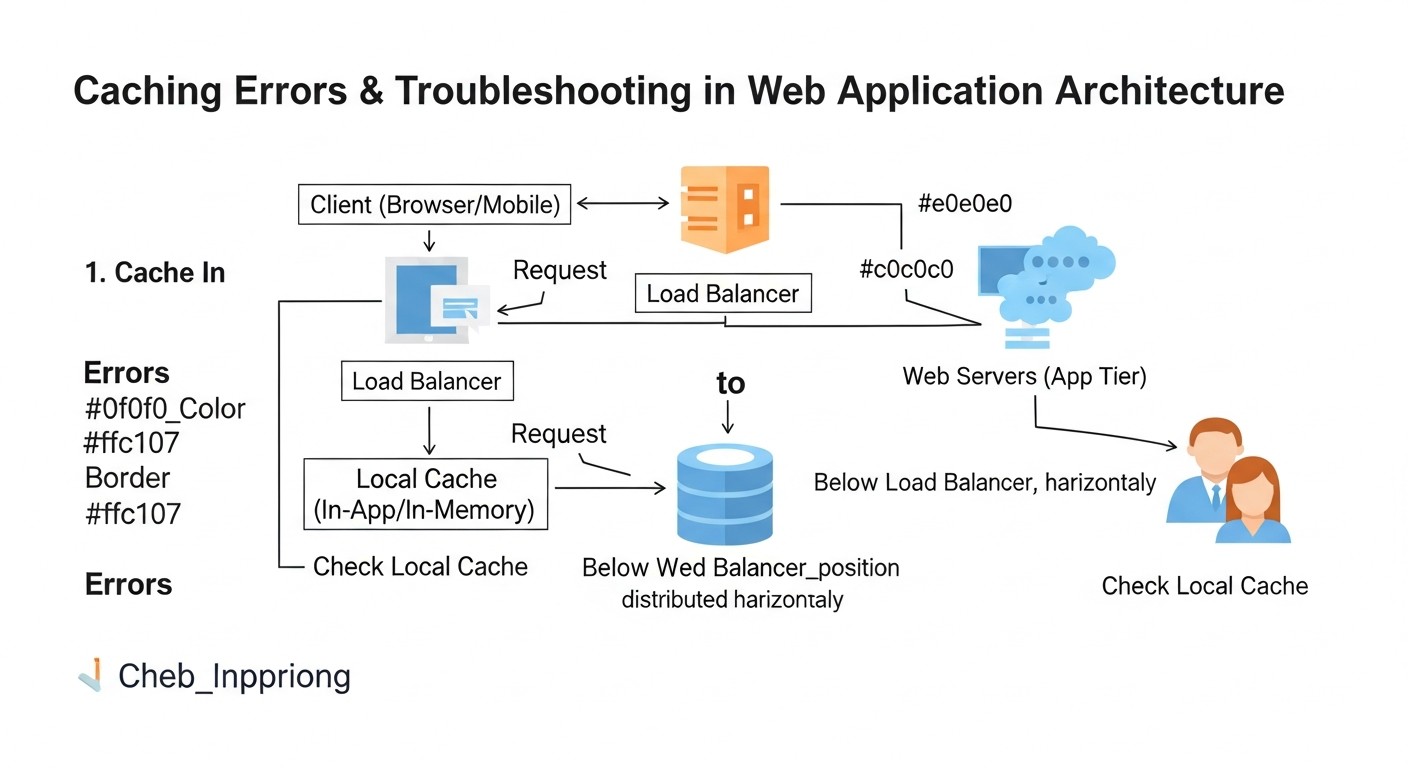

Diagram: 13. Troubleshooting: Problem Solving


Implementing and maintaining caching systems is not without its challenges. Here are typical scenarios and approaches to solving them.

13.1. Varnish Does Not Cache Content (Always MISS)

- Reason 1: `Cache-Control` or `Set-Cookie` headers. Your backend might send `Cache-Control: private`, `no-cache`, or `no-store`, or a `Set-Cookie` header, any of which prevents Varnish from caching the response by default.

Solution:

- Check backend response headers using `curl -I http://127.0.0.1:8000/` (where 8000 is your backend's port).

- In VCL (`vcl_backend_response`), explicitly remove `Set-Cookie` if it's not needed for cached pages: `unset beresp.http.Set-Cookie;`.

- Override `Cache-Control` if the backend returns incorrect values: `set beresp.http.Cache-Control = "public, max-age=300";`. Note that the TTL Varnish actually uses is `beresp.ttl`, which is computed from the original headers before `vcl_backend_response` runs, so set it explicitly as well (e.g., `set beresp.ttl = 300s;`).

- Reason 2: Incorrect HTTP methods. By default, Varnish only caches `GET` and `HEAD` requests.

Solution: Ensure you are trying to cache GET/HEAD requests. For POST and other methods, use `return (pass);` in `vcl_recv`.

- Reason 3: Backend issues. Varnish might not cache if the backend consistently returns errors (5xx).

Solution: Check backend logs, ensure it is stable and returns 200 OK. Configure `.probe` in VCL for the backend.

- Reason 4: Missing or too-small TTL. The effective `beresp.ttl` is zero or very small, for example because the backend sends `max-age=0` or an `Expires` date in the past.

Solution: In `vcl_backend_response`, set an adequate TTL, e.g., `set beresp.ttl = 5m;`.
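The header checks for Reason 1 and Reason 4 can be scripted. The following sketch inspects a backend response's headers (as a plain dict) and lists the common reasons Varnish's default behavior would refuse to cache it; the function name is hypothetical, and the list of checks is deliberately incomplete.

```python
def uncacheable_reasons(headers: dict) -> list:
    """List header-based reasons why a response is likely not cached."""
    normalized = {name.lower(): value for name, value in headers.items()}
    directives = [part.strip().lower()
                  for part in normalized.get("cache-control", "").split(",")
                  if part.strip()]
    names = {directive.split("=", 1)[0] for directive in directives}

    reasons = []
    for name in ("private", "no-cache", "no-store"):
        if name in names:
            reasons.append(f"Cache-Control contains {name}")
    if "max-age=0" in directives or "s-maxage=0" in directives:
        reasons.append("max-age is 0")
    if "set-cookie" in normalized:
        reasons.append("response sets a cookie")
    return reasons
```

An empty list does not guarantee a HIT (your own VCL may still pass the request), but a non-empty one points at the header to fix first.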

13.2. Varnish Serves Stale Content

- Reason 1: Incorrect Invalidation. You are not sending PURGE/BAN requests, or they are configured incorrectly.

Solution:

- Check Varnish logs (`varnishlog`) for PURGE/BAN requests and their successful execution.

- Ensure that the IP address from which PURGE/BAN requests are sent is allowed in VCL.

- Verify that regular expressions in BAN requests are correct.

- Reason 2: TTL is Too High. Cache lifetime is too long for frequently changing content.

Solution: Reduce beresp.ttl for this type of content.

13.3. High RAM Consumption by Redis / Varnish

- Reason 1: Insufficient `maxmemory` (for Redis) or `-s malloc` size (for Varnish). The cache grows until it hits the limit, which leads to constant data eviction.

Solution:

- Redis: Increase the `maxmemory` value in `redis.conf`.

- Varnish: Increase the `-s malloc,2G` value (e.g., to 4G) in the Varnish startup parameters.

- Monitoring: Use `redis-cli INFO memory` and `varnishstat` to track memory consumption.

- Reason 2: Ineffective Eviction Policies (for Redis).

Solution: Ensure that maxmemory-policy is set to allkeys-lru or volatile-lru for the cache.

- Reason 3: Storing Overly Large Objects.

Solution: Re-evaluate what you are caching. Perhaps some very large objects should not be cached entirely.

13.4. Redis Connection Errors from the Application

- Reason 1: Incorrect IP/Port/Password.

Solution:

- Check `bind` and `port` in `redis.conf`.

- Ensure the application uses the correct connection details.

- Check `requirepass` in `redis.conf` and the corresponding password in the application.

- Reason 2: Firewall Blocking Port.

Solution: Check firewall rules (sudo ufw status or sudo iptables -L). Allow access to port 6379 from your application's IP address.

- Reason 3: Redis Not Running.

Solution: sudo systemctl status redis-server. If not running, sudo systemctl start redis-server and check logs /var/log/redis/redis-server.log.
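The three reasons above can be told apart with a quick probe from the application host. This is a minimal sketch using only the standard library: it opens a TCP connection, sends an inline `PING`, and classifies the reply. The function names and the wording of the result strings are illustrative.

```python
import socket


def classify_ping_reply(reply: bytes) -> str:
    """Interpret the first bytes Redis sends back after PING."""
    if reply.startswith(b"+PONG"):
        return "ok"
    if reply.startswith(b"-NOAUTH"):
        return "password required"  # requirepass is set, check the app's password
    if reply.startswith(b"-"):
        return "error: " + reply[1:].decode(errors="replace").strip()
    return "unexpected reply"


def probe_redis(host: str = "127.0.0.1", port: int = 6379,
                timeout: float = 2.0) -> str:
    """Connect to Redis and report what kind of problem, if any, occurs."""
    try:
        with socket.create_connection((host, port), timeout=timeout) as sock:
            sock.sendall(b"PING\r\n")
            return classify_ping_reply(sock.recv(128))
    except ConnectionRefusedError:
        return "connection refused (Redis not running or wrong port)"
    except OSError as exc:  # includes timeouts, often a firewall drop
        return f"unreachable: {exc}"
```

A timeout usually points at the firewall, a refused connection at the service or port, and `password required` at the credentials.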

13.5. When to Contact Support

- OS Issues: If the server constantly crashes, or system errors occur that are not directly related to Redis/Varnish.

- Network Issues: If there are suspicions of network card or routing problems at the hosting level.

- Unexplained Failures: If Redis or Varnish logs indicate internal errors that you cannot interpret after reviewing the documentation.

- Licensed Versions: If you are using a commercial version of Varnish Enterprise or Redis Enterprise, feel free to contact their support for any unclear issues.

Always start by checking logs (journalctl -u varnish, journalctl -u redis-server, varnishlog) and metrics (varnishstat, redis-cli INFO). In most cases, this allows for quick problem localization and resolution.

14. FAQ: Frequently Asked Questions

14.1. Can I use only Redis or only Varnish?

Yes, you can. Redis is excellent for caching application data (sessions, DB objects), while Varnish is for caching HTTP responses and static content. However, maximum performance and flexibility are achieved when they are used together, as they complement each other, creating a multi-layered caching system. Redis works closer to the application, and Varnish works closer to the user.

14.2. How does Varnish handle HTTPS traffic?

The open-source Varnish Cache has traditionally not terminated SSL/TLS itself. To work with HTTPS, place an SSL-terminating proxy, such as Nginx or HAProxy, in front of Varnish. Nginx receives the HTTPS request from the client, decrypts it, and then passes a regular HTTP request to Varnish. Varnish processes it and returns an HTTP response back to Nginx, which encrypts it again and sends it to the client.

14.3. Which memory eviction policy should I choose for Redis?

For caching, the most popular policies are allkeys-lru (Least Recently Used) or volatile-lru. allkeys-lru evicts the least recently used keys from the entire dataset, while volatile-lru evicts only those keys that have a TTL set. If Redis is used exclusively as a cache, allkeys-lru is often a good choice. It is important to set maxmemory so that Redis does not consume all available RAM.

14.4. How to ensure high availability for Redis?

For Redis high availability, use Redis Sentinel. Sentinel is a monitoring system that watches over the Redis master instance and automatically switches to a replica in case of a master failure. For horizontal scaling and sharding, use Redis Cluster, which distributes data across multiple nodes and provides fault tolerance at the cluster level.

14.5. Can API requests be cached with Varnish?

Yes, Varnish is excellent for caching API requests, especially if they return non-personalized data or data that changes infrequently (e.g., directories, public news feeds). It is important to carefully configure VCL to avoid caching personalized or sensitive data, and to ensure adequate cache invalidation when data changes at the source.

14.6. How to avoid Varnish cache "cold start" after a restart?

After a Varnish restart, its cache is empty. To minimize the "cold start" effect, you can use:

- Warm-up scripts: Scripts that automatically "crawl" the most popular URLs after a Varnish restart, filling the cache.

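- Reload instead of restart: A full restart of `varnishd` always empties the in-memory cache. For VCL changes, load the new configuration into the running process instead (`varnishadm vcl.load` followed by `vcl.use`, or `systemctl reload varnish` where the package provides it), which keeps cached objects in place.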

- Persistent Varnish: Although Varnish is inherently non-persistent, experimental or commercial solutions exist that allow saving the cache to disk, but this adds complexity.
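A warm-up script can be as simple as requesting a list of popular URLs once after the restart. The sketch below uses only the standard library; the function names are hypothetical, and where the URL list comes from (for example, the top entries in your access logs) is left as an assumption.

```python
import urllib.request
from typing import Callable, Iterable


def fetch_status(url: str) -> int:
    """Request one URL through Varnish and return the HTTP status code."""
    with urllib.request.urlopen(url, timeout=5) as response:
        return response.status


def warm_cache(urls: Iterable[str],
               fetch: Callable[[str], int] = fetch_status) -> dict:
    """Request each URL once so Varnish stores it; report the outcome per URL."""
    results = {}
    for url in urls:
        try:
            results[url] = fetch(url)
        except OSError as exc:  # URLError and HTTPError are OSError subclasses
            results[url] = f"failed: {exc}"
    return results
```

Run it against the Varnish listener (not the backend directly), and keep the list short enough that the warm-up itself does not overload the backend.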

14.7. How secure is it to store sessions in Redis?

Storing sessions in Redis is secure when the following measures are observed:

- Password: Always use `requirepass`.

- Firewall: Restrict access to the Redis port (6379) only from your application's IP addresses.

- SSL/TLS: If Redis is on a separate server, encrypt traffic between the application and Redis using Redis's built-in TLS support (available since Redis 6), stunnel, or a VPN.

- Isolation: Do not use the same Redis instance for storing critical data and less important caches.

14.8. Can Redis and Varnish be on the same server as the application and DB?

For small and medium-sized projects on a VPS, this is a common and cost-effective practice. However, if the project grows, it is recommended to move Redis and/or Varnish to separate, specialized VPS/Dedicated servers. This improves isolation, security, and simplifies the independent scaling of each component. The main thing is to monitor RAM and CPU consumption.

14.9. How to debug VCL configuration?

Use `varnishlog` with filters to see how Varnish processes each request and why it makes certain decisions (HIT/MISS/PASS). It is also useful to add your own debug messages to VCL using `std.log("My Debug Message: " + req.url);` (this requires `import std;` at the top of the VCL file). To check VCL syntax before loading, compile the file with `varnishd -C -f /etc/varnish/default.vcl`.

14.10. What are the alternatives to Redis and Varnish?

For Redis: Memcached (simpler, but less functionality), Tarantool (high-performance, with SQL and Lua scripts), KeyDB (a Redis fork with multi-threading).

For Varnish: Nginx (with caching module), Apache Traffic Server, Cloudflare (CDN, but not on VPS/Dedicated), Akamai (CDN). However, for VPS/Dedicated and maximum control, Varnish remains one of the best solutions for HTTP caching.

15. Conclusion

In 2026, as demands for web application speed and responsiveness reach unprecedented levels, effective caching is no longer an option but a mandatory element of any high-performance infrastructure. The combination of Redis and Varnish on VPS/Dedicated servers represents a powerful and cost-effective solution capable of significantly improving user experience, reducing operational costs, and enhancing the overall stability of your services.

We have examined how Redis, with its multifunctional in-memory data store, optimizes backend operations by caching database query results, managing sessions, and providing fast access to temporary data. At the same time, Varnish Cache, acting as a high-performance HTTP accelerator, offloads the main burden from the web server and application by instantly serving static and frequently requested dynamic content.

The key to success lies not only in installing these tools but also in their proper configuration, deep integration with the application, and, most importantly, in developing well-thought-out cache invalidation strategies. Without accurate and timely cache clearing, all speed advantages can be negated by serving outdated data, which will damage the reputation and functionality of your service.

Continuous monitoring is an equally important aspect. Tools like Prometheus and Grafana allow real-time tracking of caching efficiency, identifying bottlenecks, and making informed decisions for further optimization. Remember that performance is not a one-time task but an ongoing process of improvement.

Next steps for the reader:

- Conduct an audit: Evaluate your application's current performance, identify the slowest sections and data that can be cached.

- Start small: Implement caching incrementally. Begin with Redis for sessions or Varnish for static content, then expand functionality.

- Test: Every change in cache configuration should be accompanied by load testing to confirm a positive effect.

- Monitor: Install and configure a monitoring system to track key caching metrics.

- Learn: A deep understanding of VCL for Varnish and all Redis capabilities will allow you to create truly flexible and high-performance solutions.

Ultimately, mastering caching strategies with Redis and Varnish will not only transform your web application into a lightning-fast service but also give you a competitive edge in the 2026 market, ensuring the stability and scalability of your business.
